mirror of https://github.com/hibiken/asynq.git

synced 2025-06-07 23:32:57 +08:00

Compare commits

No commits in common. "master" and "v0.9.2" have entirely different histories.

4

.github/FUNDING.yml

vendored

@@ -1,4 +0,0 @@
# These are supported funding model platforms

github: [hibiken]
open_collective: ken-hibino

15

.github/ISSUE_TEMPLATE/bug_report.md

vendored

@@ -3,20 +3,13 @@ name: Bug report
about: Create a report to help us improve
title: "[BUG] Description of the bug"
labels: bug
assignees:
  - hibiken
  - kamikazechaser

assignees: hibiken

---

**Describe the bug**
A clear and concise description of what the bug is.

**Environment (please complete the following information):**
- OS: [e.g. MacOS, Linux]
- `asynq` package version [e.g. v0.25.0]
- Redis/Valkey version

**To Reproduce**
Steps to reproduce the behavior (Code snippets if applicable):
1. Setup background processing ...
@@ -29,5 +22,9 @@ A clear and concise description of what you expected to happen.
**Screenshots**
If applicable, add screenshots to help explain your problem.

**Environment (please complete the following information):**
- OS: [e.g. MacOS, Linux]
- Version of `asynq` package [e.g. v1.0.0]

**Additional context**
Add any other context about the problem here.

4

.github/ISSUE_TEMPLATE/feature_request.md

vendored

@@ -3,9 +3,7 @@ name: Feature request
about: Suggest an idea for this project
title: "[FEATURE REQUEST] Description of the feature request"
labels: enhancement
assignees:
  - hibiken
  - kamikazechaser
assignees: hibiken

---

24

.github/dependabot.yaml

vendored

@@ -1,24 +0,0 @@
version: 2
updates:
  - package-ecosystem: "gomod"
    directory: "/"
    schedule:
      interval: "weekly"
    labels:
      - "pr-deps"
  - package-ecosystem: "gomod"
    directory: "/tools"
    schedule:
      interval: "weekly"
    labels:
      - "pr-deps"
  - package-ecosystem: "gomod"
    directory: "/x"
    schedule:
      interval: "weekly"
    labels:
      - "pr-deps"
  - package-ecosystem: "github-actions"
    directory: "/"
    schedule:
      interval: "weekly"

82

.github/workflows/benchstat.yml

vendored

@@ -1,82 +0,0 @@
# This workflow runs benchmarks against the current branch,
# compares them to benchmarks against master,
# and uploads the results as an artifact.

name: benchstat

on: [pull_request]

jobs:
  incoming:
    runs-on: ubuntu-latest
    services:
      redis:
        image: redis:7
        ports:
          - 6379:6379
    steps:
      - name: Checkout
        uses: actions/checkout@v4
      - name: Set up Go
        uses: actions/setup-go@v5
        with:
          go-version: 1.23.x
      - name: Benchmark
        run: go test -run=^$ -bench=. -count=5 -timeout=60m ./... | tee -a new.txt
      - name: Upload Benchmark
        uses: actions/upload-artifact@v4
        with:
          name: bench-incoming
          path: new.txt

  current:
    runs-on: ubuntu-latest
    services:
      redis:
        image: redis:7
        ports:
          - 6379:6379
    steps:
      - name: Checkout
        uses: actions/checkout@v4
        with:
          ref: master
      - name: Set up Go
        uses: actions/setup-go@v5
        with:
          go-version: 1.23.x
      - name: Benchmark
        run: go test -run=^$ -bench=. -count=5 -timeout=60m ./... | tee -a old.txt
      - name: Upload Benchmark
        uses: actions/upload-artifact@v4
        with:
          name: bench-current
          path: old.txt

  benchstat:
    needs: [incoming, current]
    runs-on: ubuntu-latest
    steps:
      - name: Checkout
        uses: actions/checkout@v4
      - name: Set up Go
        uses: actions/setup-go@v5
        with:
          go-version: 1.23.x
      - name: Install benchstat
        run: go get -u golang.org/x/perf/cmd/benchstat
      - name: Download Incoming
        uses: actions/download-artifact@v4
        with:
          name: bench-incoming
      - name: Download Current
        uses: actions/download-artifact@v4
        with:
          name: bench-current
      - name: Benchstat Results
        run: benchstat old.txt new.txt | tee -a benchstat.txt
      - name: Upload benchstat results
        uses: actions/upload-artifact@v4
        with:
          name: benchstat
          path: benchstat.txt

83

.github/workflows/build.yml

vendored

@@ -1,83 +0,0 @@
name: build

on: [push, pull_request]

jobs:
  build:
    strategy:
      matrix:
        os: [ubuntu-latest]
        go-version: [1.22.x, 1.23.x]
    runs-on: ${{ matrix.os }}
    services:
      redis:
        image: redis:7
        ports:
          - 6379:6379
    steps:
      - uses: actions/checkout@v4

      - name: Set up Go
        uses: actions/setup-go@v5
        with:
          go-version: ${{ matrix.go-version }}
          cache: false

      - name: Build core module
        run: go build -v ./...

      - name: Build x module
        run: cd x && go build -v ./... && cd ..

      - name: Test core module
        run: go test -race -v -coverprofile=coverage.txt -covermode=atomic ./...

      - name: Test x module
        run: cd x && go test -race -v ./... && cd ..

      - name: Benchmark Test
        run: go test -run=^$ -bench=. -loglevel=debug ./...

      - name: Upload coverage to Codecov
        uses: codecov/codecov-action@v5

  build-tool:
    strategy:
      matrix:
        os: [ubuntu-latest]
        go-version: [1.22.x, 1.23.x]
    runs-on: ${{ matrix.os }}
    services:
      redis:
        image: redis:7
        ports:
          - 6379:6379
    steps:
      - uses: actions/checkout@v4

      - name: Set up Go
        uses: actions/setup-go@v5
        with:
          go-version: ${{ matrix.go-version }}
          cache: false

      - name: Build tools module
        run: cd tools && go build -v ./... && cd ..

      - name: Test tools module
        run: cd tools && go test -race -v ./... && cd ..

  golangci:
    name: lint
    runs-on: ubuntu-latest
    steps:
      - uses: actions/checkout@v4

      - uses: actions/setup-go@v5
        with:
          go-version: stable

      - name: golangci-lint
        uses: golangci/golangci-lint-action@v6
        with:
          version: v1.61

10

.gitignore

vendored

@@ -1,4 +1,3 @@
vendor
# Binaries for programs and plugins
*.exe
*.exe~
@@ -15,13 +14,8 @@ vendor
# Ignore examples for now
/examples

# Ignore tool binaries
# Ignore command binary
/tools/asynq/asynq
/tools/metrics_exporter/metrics_exporter

# Ignore asynq config file
.asynq.*

# Ignore editor config files
.vscode
.idea
.asynq.*

13

.travis.yml

Normal file

@@ -0,0 +1,13 @@
language: go
go_import_path: github.com/hibiken/asynq
git:
  depth: 1
go: [1.13.x, 1.14.x]
script:
  - go test -race -v -coverprofile=coverage.txt -covermode=atomic ./...
  - go test -run=XXX -bench=. -loglevel=debug ./...
services:
  - redis-server
after_success:
  - bash ./.travis/benchcmp.sh
  - bash <(curl -s https://codecov.io/bash)

18

.travis/benchcmp.sh

Executable file

@@ -0,0 +1,18 @@
if [ "${TRAVIS_PULL_REQUEST_BRANCH:-$TRAVIS_BRANCH}" != "master" ]; then
  REMOTE_URL="$(git config --get remote.origin.url)";
  cd ${TRAVIS_BUILD_DIR}/.. && \
  git clone ${REMOTE_URL} "${TRAVIS_REPO_SLUG}-bench" && \
  cd "${TRAVIS_REPO_SLUG}-bench" && \

  # Benchmark master
  git checkout master && \
  go test -run=XXX -bench=. ./... > master.txt && \

  # Benchmark feature branch
  git checkout ${TRAVIS_COMMIT} && \
  go test -run=XXX -bench=. ./... > feature.txt && \

  # compare two benchmarks
  go get -u golang.org/x/tools/cmd/benchcmp && \
  benchcmp master.txt feature.txt;
fi
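The script above benchmarks `master` and the feature commit, then compares the two output files. As a rough illustration of what such a comparison involves, here is a minimal Go sketch that parses `go test -bench` output and reports the percent change in ns/op. It is not how `benchcmp` or `benchstat` is implemented; the function names and the `BenchmarkEnqueue` sample lines are hypothetical.

```go
package main

import (
	"bufio"
	"fmt"
	"strconv"
	"strings"
)

// parseBench extracts ns/op per benchmark name from `go test -bench` output.
// Lines that do not look like benchmark results are ignored.
func parseBench(out string) map[string]float64 {
	res := make(map[string]float64)
	sc := bufio.NewScanner(strings.NewReader(out))
	for sc.Scan() {
		f := strings.Fields(sc.Text())
		// Expected shape: BenchmarkName-8  <iterations>  <value> ns/op ...
		if len(f) < 4 || !strings.HasPrefix(f[0], "Benchmark") || f[3] != "ns/op" {
			continue
		}
		ns, err := strconv.ParseFloat(f[2], 64)
		if err != nil {
			continue
		}
		res[f[0]] = ns
	}
	return res
}

// delta returns the percent change in ns/op (old -> new) for every
// benchmark that appears in both outputs.
func delta(oldOut, newOut string) map[string]float64 {
	o, n := parseBench(oldOut), parseBench(newOut)
	d := make(map[string]float64)
	for name, ov := range o {
		if nv, ok := n[name]; ok && ov != 0 {
			d[name] = (nv - ov) / ov * 100
		}
	}
	return d
}

func main() {
	// Hypothetical sample lines standing in for master.txt and feature.txt.
	master := "BenchmarkEnqueue-8   1000   2000 ns/op\n"
	feature := "BenchmarkEnqueue-8   1000   1500 ns/op\n"
	for name, pct := range delta(master, feature) {
		fmt.Printf("%s: %+.1f%%\n", name, pct)
	}
}
```

A negative percentage means the feature branch is faster. A single run per side is noisy, which is presumably why the newer workflow passes `-count=5` and hands the files to `benchstat`.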

404

CHANGELOG.md

@@ -7,410 +7,6 @@ and this project adheres to [Semantic Versioning](https://semver.org/spec/v2.0.0

## [Unreleased]

## [0.25.1] - 2024-12-11

### Upgrades

* Some packages

### Added

* Add `HeartbeatInterval` option to the scheduler (PR: https://github.com/hibiken/asynq/pull/956)
* Add `RedisUniversalClient` support to periodic task manager (PR: https://github.com/hibiken/asynq/pull/958)
* Add `--insecure` flag to CLI dash command (PR: https://github.com/hibiken/asynq/pull/980)
* Add logging for registration errors (PR: https://github.com/hibiken/asynq/pull/657)

### Fixes
- Perf: Use string concat in place of fmt.Sprintf in hot path (PR: https://github.com/hibiken/asynq/pull/962)
- Perf: Init map with size (PR: https://github.com/hibiken/asynq/pull/673)
- Fix: `Scheduler` and `PeriodicTaskManager` graceful shutdown (PR: https://github.com/hibiken/asynq/pull/977)
- Fix: `Server` graceful shutdown on UNIX systems (PR: https://github.com/hibiken/asynq/pull/982)

## [0.25.0] - 2024-10-29

### Upgrades
- Minimum Go version is set to 1.22 (PR: https://github.com/hibiken/asynq/pull/925)
- Internal protobuf package is upgraded to address security advisories (PR: https://github.com/hibiken/asynq/pull/925)
- Most packages are upgraded
- CI/CD spec upgraded

### Added
- `IsPanicError` function is introduced to support catching of panic errors when processing tasks (PR: https://github.com/hibiken/asynq/pull/491)
- `JanitorInterval` and `JanitorBatchSize` are added as Server options (PR: https://github.com/hibiken/asynq/pull/715)
- `NewClientFromRedisClient` is introduced to allow reusing an existing redis client (PR: https://github.com/hibiken/asynq/pull/742)
- `TaskCheckInterval` config option is added to specify the interval between checks for new tasks to process when all queues are empty (PR: https://github.com/hibiken/asynq/pull/694)
- `Ping` method is added to Client, Server and Scheduler (PR: https://github.com/hibiken/asynq/pull/585)
- `RevokeTask` error type is introduced to prevent a task from being retried or archived (PR: https://github.com/hibiken/asynq/pull/882)
- `SentinelUsername` is added as a redis config option (PR: https://github.com/hibiken/asynq/pull/924)
- Some jitter is introduced to improve latency when fetching jobs in the processor (PR: https://github.com/hibiken/asynq/pull/868)
- Add task enqueue command to the CLI (PR: https://github.com/hibiken/asynq/pull/918)
- Add a map cache (concurrent safe) to keep track of queues that ultimately reduces redis load when enqueuing tasks (PR: https://github.com/hibiken/asynq/pull/946)

### Fixes
- Archived tasks that are trimmed should now be deleted (PR: https://github.com/hibiken/asynq/pull/743)
- Fix lua script when listing task messages with an expired lease (PR: https://github.com/hibiken/asynq/pull/709)
- Fix potential context leaks due to cancellation not being called (PR: https://github.com/hibiken/asynq/pull/926)
- Misc documentation fixes
- Misc test fixes

## [0.24.1] - 2023-05-01

### Changed
- Updated package version dependency for go-redis

## [0.24.0] - 2023-01-02

### Added
- `PreEnqueueFunc` and `PostEnqueueFunc` are added to `Scheduler`, and `EnqueueErrorHandler` is deprecated (PR: https://github.com/hibiken/asynq/pull/476)

### Changed
- Removed error log when `Scheduler` failed to enqueue a task. Use `PostEnqueueFunc` to check for errors and task actions if needed.
- Changed log level from ERROR to WARNING when `Scheduler` failed to record `SchedulerEnqueueEvent`.

## [0.23.0] - 2022-04-11

### Added

- `Group` option is introduced to enqueue task in a group.
- `GroupAggregator` and related types are introduced for task aggregation feature.
- `GroupGracePeriod`, `GroupMaxSize`, `GroupMaxDelay`, and `GroupAggregator` fields are added to `Config`.
- `Inspector` has new methods related to "aggregating tasks".
- `Group` field is added to `TaskInfo`.
- (CLI): `group ls` command is added
- (CLI): `task ls` supports listing aggregating tasks via `--state=aggregating --group=<GROUP>` flags
- Enable rediss url parsing support

### Fixed

- Fixed overflow issue with 32-bit systems (For details, see https://github.com/hibiken/asynq/pull/426)

## [0.22.1] - 2022-02-20

### Fixed

- Fixed Redis version compatibility: Keep support for redis v4.0+

## [0.22.0] - 2022-02-19

### Added

- `BaseContext` is introduced in `Config` to specify callback hook to provide a base `context` from which `Handler` `context` is derived
- `IsOrphaned` field is added to `TaskInfo` to describe a task left in active state with no worker processing it.

### Changed

- `Server` now recovers tasks with an expired lease. Recovered tasks are retried/archived with `ErrLeaseExpired` error.

## [0.21.0] - 2022-01-22

### Added

- `PeriodicTaskManager` is added. Prefer using this over `Scheduler` as it has better support for dynamic periodic tasks.
- The `asynq stats` command now supports a `--json` option, making its output a JSON object
- Introduced new configuration for `DelayedTaskCheckInterval`. See [godoc](https://godoc.org/github.com/hibiken/asynq) for more details.

## [0.20.0] - 2021-12-19

### Added

- Package `x/metrics` is added.
- Tool `tools/metrics_exporter` binary is added.
- `ProcessedTotal` and `FailedTotal` fields were added to `QueueInfo` struct.

## [0.19.1] - 2021-12-12

### Added

- `Latency` field is added to `QueueInfo`.
- `EnqueueContext` method is added to `Client`.

### Fixed

- Fixed an error when a user passes a duration less than 1s to the `Unique` option

## [0.19.0] - 2021-11-06

### Changed

- `NewTask` takes `Option` as variadic argument
- Bumped minimum supported go version to 1.14 (i.e. go1.14 or higher is required).

### Added

- `Retention` option is added to allow user to specify task retention duration after completion.
- `TaskID` option is added to allow user to specify task ID.
- `ErrTaskIDConflict` sentinel error value is added.
- `ResultWriter` type is added and provided through `Task.ResultWriter` method.
- `TaskInfo` has new fields `CompletedAt`, `Result` and `Retention`.

### Removed

- `Client.SetDefaultOptions` is removed. Use `NewTask` instead to pass default options for tasks.

## [0.18.6] - 2021-10-03

### Changed

- Updated `github.com/go-redis/redis` package to v8

## [0.18.5] - 2021-09-01

### Added

- `IsFailure` config option is added to determine whether error returned from Handler counts as a failure.

## [0.18.4] - 2021-08-17

### Fixed

- Scheduler methods are now thread-safe. It's now safe to call `Register` and `Unregister` concurrently.

## [0.18.3] - 2021-08-09

### Changed

- `Client.Enqueue` no longer enqueues tasks with empty typename; an error is returned instead.

## [0.18.2] - 2021-07-15

### Changed

- Changed `Queue` function to not convert the provided queue name to lowercase. Queue names are now case-sensitive.
- `QueueInfo.MemoryUsage` is now an approximate usage value.

### Fixed

- Fixed latency issue around memory usage (see https://github.com/hibiken/asynq/issues/309).

## [0.18.1] - 2021-07-04

### Changed

- Changed to execute task recovering logic when server starts up; previously it needed to wait for a minute for task recovering logic to execute.

### Fixed

- Fixed task recovering logic to execute every minute

## [0.18.0] - 2021-06-29

### Changed

- `NewTask` function now takes a slice of bytes (`[]byte`) as payload.
- Task `Type` and `Payload` should be accessed by a method call.
- `Server` API has changed. Renamed `Quiet` to `Stop`. Renamed `Stop` to `Shutdown`. _Note:_ As a result of this renaming, the behavior of `Stop` has changed. Please update the existing code to call `Shutdown` where it used to call `Stop`.
- `Scheduler` API has changed. Renamed `Stop` to `Shutdown`.
- Requires redis v4.0+ for multiple field/value pair support
- `Client.Enqueue` now returns `TaskInfo`
- `Inspector.RunTaskByKey` is replaced with `Inspector.RunTask`
- `Inspector.DeleteTaskByKey` is replaced with `Inspector.DeleteTask`
- `Inspector.ArchiveTaskByKey` is replaced with `Inspector.ArchiveTask`
- `inspeq` package is removed. All types and functions from the package are moved to `asynq` package.
- `WorkerInfo` field names have changed.
- `Inspector.CancelActiveTask` is renamed to `Inspector.CancelProcessing`
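Since the payload is now raw bytes, callers pick their own encoding. The following is a minimal sketch using JSON from the standard library; `EmailPayload` and the two helper functions are hypothetical names for illustration. With asynq itself, the encoded bytes would be passed as the payload argument of `NewTask`, and a handler would read them back through the task's `Payload` method.

```go
package main

import (
	"encoding/json"
	"fmt"
)

// EmailPayload is a hypothetical payload type used only for this sketch.
type EmailPayload struct {
	UserID   int    `json:"user_id"`
	Template string `json:"template"`
}

// encodePayload turns the typed payload into the byte slice a task carries.
func encodePayload(p EmailPayload) ([]byte, error) {
	return json.Marshal(p)
}

// decodePayload is what a handler would do with the bytes it receives.
func decodePayload(b []byte) (EmailPayload, error) {
	var p EmailPayload
	err := json.Unmarshal(b, &p)
	return p, err
}

func main() {
	b, err := encodePayload(EmailPayload{UserID: 42, Template: "welcome"})
	if err != nil {
		panic(err)
	}
	fmt.Println(string(b))

	p, err := decodePayload(b)
	if err != nil {
		panic(err)
	}
	fmt.Println(p.UserID, p.Template)
}
```

JSON is only one option; any encoding that produces a `[]byte` (protobuf, gob, plain text) fits the same shape.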

## [0.17.2] - 2021-06-06

### Fixed

- Free unique lock when task is deleted (https://github.com/hibiken/asynq/issues/275).

## [0.17.1] - 2021-04-04

### Fixed

- Fix bug in internal `RDB.memoryUsage` method.

## [0.17.0] - 2021-03-24

### Added

- `DialTimeout`, `ReadTimeout`, and `WriteTimeout` options are added to `RedisConnOpt`.

## [0.16.1] - 2021-03-20

### Fixed

- Replace `KEYS` command with `SCAN` as recommended by [redis doc](https://redis.io/commands/KEYS).

## [0.16.0] - 2021-03-10

### Added

- `Unregister` method is added to `Scheduler` to remove a registered entry.

## [0.15.0] - 2021-01-31

**IMPORTANT**: All `Inspector` related code is moved to subpackage "github.com/hibiken/asynq/inspeq"

### Changed

- `Inspector` related code is moved to subpackage "github.com/hibiken/asynq/inspeq".
- `RedisConnOpt` interface has changed slightly. If you have been passing `RedisClientOpt`, `RedisFailoverClientOpt`, or `RedisClusterClientOpt` as a pointer,
  update your code to pass as a value.
- `ErrorMsg` field in `RetryTask` and `ArchivedTask` was renamed to `LastError`.

### Added

- `MaxRetry`, `Retried`, `LastError` fields were added to all task types returned from `Inspector`.
- `MemoryUsage` field was added to `QueueStats`.
- `DeleteAllPendingTasks`, `ArchiveAllPendingTasks` were added to `Inspector`
- `DeleteTaskByKey` and `ArchiveTaskByKey` now support deleting/archiving `PendingTask`.
- asynq CLI now supports deleting/archiving pending tasks.

## [0.14.1] - 2021-01-19

### Fixed

- `go.mod` file for CLI

## [0.14.0] - 2021-01-14

**IMPORTANT**: Please run `asynq migrate` command to migrate from the previous versions.

### Changed

- Renamed `DeadTask` to `ArchivedTask`.
- Renamed the operation `Kill` to `Archive` in `Inspector`.
- Print stack trace when Handler panics.
- Include a file name and a line number in the error message when recovering from a panic.

### Added

- `DefaultRetryDelayFunc` is now a public API, which can be used in the custom `RetryDelayFunc`.
- `SkipRetry` error is added to be used as a return value from `Handler`.
- `Servers` method is added to `Inspector`
- `CancelActiveTask` method is added to `Inspector`.
- `ListSchedulerEnqueueEvents` method is added to `Inspector`.
- `SchedulerEntries` method is added to `Inspector`.
- `DeleteQueue` method is added to `Inspector`.
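The `SkipRetry` entry describes a sentinel error that a handler returns to signal that retrying is pointless. The general pattern can be sketched with the standard library alone. Here `ErrSkipRetry`, `handle` and `shouldRetry` are hypothetical stand-ins, not asynq's own definitions.

```go
package main

import (
	"errors"
	"fmt"
)

// ErrSkipRetry is a local stand-in for a "do not retry" sentinel error.
var ErrSkipRetry = errors.New("skip retry for the task")

// handle is a hypothetical handler: an empty payload can never succeed,
// so it wraps the sentinel; "flaky" simulates a transient failure.
func handle(payload string) error {
	switch payload {
	case "":
		return fmt.Errorf("empty payload: %w", ErrSkipRetry)
	case "flaky":
		return errors.New("temporary failure")
	default:
		return nil
	}
}

// shouldRetry reports whether a failed task is worth retrying.
// errors.Is sees through the %w wrapping added by the handler.
func shouldRetry(err error) bool {
	return err != nil && !errors.Is(err, ErrSkipRetry)
}

func main() {
	for _, p := range []string{"", "flaky", "ok"} {
		err := handle(p)
		fmt.Printf("payload=%q err=%v retry=%v\n", p, err, shouldRetry(err))
	}
}
```

Wrapping with `%w` keeps the descriptive message for logs while still letting the caller detect the sentinel.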

## [0.13.1] - 2020-11-22

### Fixed

- Fixed processor to wait for specified time duration before forcefully shutting down workers.

## [0.13.0] - 2020-10-13

### Added

- `Scheduler` type is added to enable periodic tasks. See the godoc for its APIs and [wiki](https://github.com/hibiken/asynq/wiki/Periodic-Tasks) for the getting-started guide.

### Changed

- interface `Option` has changed. See the godoc for the new interface.
  This change would have no impact as long as you are using exported functions (e.g. `MaxRetry`, `Queue`, etc)
  to create `Option`s.

### Added

- `Payload.String() string` method is added
- `Payload.MarshalJSON() ([]byte, error)` method is added

## [0.12.0] - 2020-09-12

**IMPORTANT**: If you are upgrading from a previous version, please install the latest version of the CLI `go get -u github.com/hibiken/asynq/tools/asynq` and run `asynq migrate` command. No process should be writing to Redis while you run the migration command.

## The semantics of queue have changed

Previously, we called tasks that are ready to be processed _"Enqueued tasks"_, and other tasks that are scheduled to be processed in the future _"Scheduled tasks"_, etc.
We changed the semantics of _"Enqueue"_ slightly; all tasks that a client pushes to Redis are _Enqueued_ to a queue. Within a queue, tasks will transition from one state to another.
Possible task states are:

- `Pending`: task is ready to be processed (previously called "Enqueued")
- `Active`: task is currently being processed (previously called "InProgress")
- `Scheduled`: task is scheduled to be processed in the future
- `Retry`: task failed to be processed and will be retried again in the future
- `Dead`: task has exhausted all of its retries and is stored for manual inspection purposes

**This change in semantics is reflected in the new `Inspector` API and CLI commands.**
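The five states listed above can be pictured as a small state machine. The sketch below is an assumption-laden illustration: the state names come from the changelog, but the event names (`Due`, `Picked`, `Failed`, `Exhausted`) and the transition table are hypothetical and are not taken from asynq's implementation.

```go
package main

import "fmt"

// State names follow the changelog entry above.
type State string

const (
	Pending   State = "pending"
	Active    State = "active"
	Scheduled State = "scheduled"
	Retry     State = "retry"
	Dead      State = "dead"
)

// Event names are hypothetical labels for what moves a task along.
type Event string

const (
	Due       Event = "due"       // scheduled time or retry delay has elapsed
	Picked    Event = "picked"    // a worker takes the task
	Failed    Event = "failed"    // processing failed, retries remain
	Exhausted Event = "exhausted" // processing failed, no retries left
)

// transitions is an assumed table, chosen to match the prose descriptions.
var transitions = map[State]map[Event]State{
	Scheduled: {Due: Pending},
	Pending:   {Picked: Active},
	Active:    {Failed: Retry, Exhausted: Dead},
	Retry:     {Due: Pending},
}

// next returns the state reached from s on event e, or false if the
// transition is not in the table.
func next(s State, e Event) (State, bool) {
	to, ok := transitions[s][e]
	return to, ok
}

func main() {
	s := Scheduled
	for _, e := range []Event{Due, Picked, Failed, Due, Picked, Exhausted} {
		to, ok := next(s, e)
		if !ok {
			fmt.Printf("%s: no transition on %s\n", s, e)
			return
		}
		fmt.Printf("%s --%s--> %s\n", s, e, to)
		s = to
	}
}
```

A task that succeeds simply leaves the queue, so the sketch has no "done" state; later entries in this changelog rename `Dead` to archived.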

---

### Changed

#### `Client`

Use `ProcessIn` or `ProcessAt` option to schedule a task instead of `EnqueueIn` or `EnqueueAt`.

| Previously                  | v0.12.0                                    |
| --------------------------- | ------------------------------------------ |
| `client.EnqueueAt(t, task)` | `client.Enqueue(task, asynq.ProcessAt(t))` |
| `client.EnqueueIn(d, task)` | `client.Enqueue(task, asynq.ProcessIn(d))` |
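The table shows two dedicated methods collapsing into a single `Enqueue` call that takes an option. A toy version of that idea, using functional options over the standard library, might look like the sketch below. The `schedule` struct, the `Option` func type and `processTime` are hypothetical; asynq's real `Option` is an interface and its client talks to Redis.

```go
package main

import (
	"fmt"
	"time"
)

// schedule records when a task should be processed.
type schedule struct {
	at time.Time     // absolute time, if set
	in time.Duration // relative delay, if set
}

// Option mutates the schedule; a func type keeps the sketch short.
type Option func(*schedule)

// ProcessAt asks for processing at an absolute time.
func ProcessAt(t time.Time) Option {
	return func(s *schedule) { s.at, s.in = t, 0 }
}

// ProcessIn asks for processing after a delay.
func ProcessIn(d time.Duration) Option {
	return func(s *schedule) { s.at, s.in = time.Time{}, d }
}

// processTime resolves the options against "now". With no option the
// task is ready immediately; the last option given wins.
func processTime(now time.Time, opts ...Option) time.Time {
	var s schedule
	for _, o := range opts {
		o(&s)
	}
	switch {
	case !s.at.IsZero():
		return s.at
	case s.in > 0:
		return now.Add(s.in)
	default:
		return now
	}
}

// Fixed values so the example output does not depend on the wall clock.
var epoch = time.Unix(0, 0).UTC()

const minute = time.Minute

func main() {
	fmt.Println(processTime(epoch))                                // immediate
	fmt.Println(processTime(epoch, ProcessIn(10*minute)))          // epoch + 10m
	fmt.Println(processTime(epoch, ProcessAt(epoch.Add(2*minute)))) // epoch + 2m
}
```

One enqueue entry point plus options keeps the client API small as new scheduling behaviours are added.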

#### `Inspector`

All Inspector methods are scoped to a queue, and the methods take `qname (string)` as the first argument.
`EnqueuedTask` is renamed to `PendingTask`, along with its corresponding methods.
`InProgressTask` is renamed to `ActiveTask`, along with its corresponding methods.
Command "Enqueue" is replaced by the verb "Run" (e.g. `EnqueueAllScheduledTasks` --> `RunAllScheduledTasks`)

#### `CLI`

CLI commands are restructured to use subcommands. Commands are organized into a few management commands:
To view details on any command, use `asynq help <command> <subcommand>`.

- `asynq stats`
- `asynq queue [ls inspect history rm pause unpause]`
- `asynq task [ls cancel delete kill run delete-all kill-all run-all]`
- `asynq server [ls]`

### Added

#### `RedisConnOpt`

- `RedisClusterClientOpt` is added to connect to Redis Cluster.
- `Username` field is added to all `RedisConnOpt` types in order to authenticate connection when Redis ACLs are used.

#### `Client`

- `ProcessIn(d time.Duration) Option` and `ProcessAt(t time.Time) Option` are added to replace `EnqueueIn` and `EnqueueAt` functionality.

#### `Inspector`

- `Queues() ([]string, error)` method is added to get all queue names.
- `ClusterKeySlot(qname string) (int64, error)` method is added to get queue's hash slot in Redis cluster.
- `ClusterNodes(qname string) ([]ClusterNode, error)` method is added to get a list of Redis cluster nodes for the given queue.
- `Close() error` method is added to close connection with redis.

#### `Handler`

- `GetQueueName(ctx context.Context) (string, bool)` helper is added to extract queue name from a context.

## [0.11.0] - 2020-07-28

### Added

- `Inspector` type was added to monitor and mutate state of queues and tasks.
- `HealthCheckFunc` and `HealthCheckInterval` fields were added to `Config` to allow user to specify a callback
  function to check for broker connection.

## [0.10.0] - 2020-07-06

### Changed

- All tasks now require a timeout or deadline. By default, timeout is set to 30 mins.
- Tasks that exceed their deadline are automatically retried.
- Encoding schema for task message has changed. Please install the latest CLI and run `migrate` command if
  you have tasks enqueued with the previous version of asynq.
- API of `(*Client).Enqueue`, `(*Client).EnqueueIn`, and `(*Client).EnqueueAt` has changed to return a `*Result`.
- API of `ErrorHandler` has changed. It now takes context as the first argument and removed `retried`, `maxRetry` from the argument list.
  Use `GetRetryCount` and/or `GetMaxRetry` to get the count values.
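The first two entries say that every task runs under a timeout or deadline and that overrunning it counts as a failure to be retried. A minimal sketch of enforcing a per-task timeout with `context` follows. `runWithTimeout`, `timedOut` and the two handlers are hypothetical helpers; this is not asynq's processor code.

```go
package main

import (
	"context"
	"errors"
	"fmt"
	"time"
)

// Handler is a hypothetical task handler that honours its context.
type Handler func(ctx context.Context) error

// shortTimeout keeps the demo fast; the changelog's default is 30 minutes.
const shortTimeout = 20 * time.Millisecond

// runWithTimeout runs h under a deadline and returns either the handler's
// own result or the context error if the deadline passes first.
func runWithTimeout(timeout time.Duration, h Handler) error {
	ctx, cancel := context.WithTimeout(context.Background(), timeout)
	defer cancel()

	done := make(chan error, 1) // buffered so the goroutine never blocks
	go func() { done <- h(ctx) }()

	select {
	case err := <-done:
		return err
	case <-ctx.Done():
		return ctx.Err()
	}
}

// timedOut reports whether err means the deadline was exceeded, which a
// worker could treat as "retry later".
func timedOut(err error) bool {
	return errors.Is(err, context.DeadlineExceeded)
}

// slowHandler would take a second, but stops early when the context ends.
func slowHandler(ctx context.Context) error {
	select {
	case <-time.After(time.Second):
		return nil
	case <-ctx.Done():
		return ctx.Err()
	}
}

// fastHandler finishes immediately.
func fastHandler(ctx context.Context) error { return nil }

func main() {
	fmt.Println("slow timed out:", timedOut(runWithTimeout(shortTimeout, slowHandler)))
	fmt.Println("fast error:", runWithTimeout(shortTimeout, fastHandler))
}
```

Handlers that ignore `ctx.Done()` keep running after the deadline, so cooperative cancellation inside the handler matters as much as the timeout itself.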

## [0.9.4] - 2020-06-13

### Fixed

- Fixes issue of same tasks processed by more than one worker (https://github.com/hibiken/asynq/issues/90).

## [0.9.3] - 2020-06-12

### Fixed

- Fixes the JSON number overflow issue (https://github.com/hibiken/asynq/issues/166).

## [0.9.2] - 2020-06-08

### Added

@@ -1,128 +0,0 @@
# Contributor Covenant Code of Conduct

## Our Pledge

We as members, contributors, and leaders pledge to make participation in our
community a harassment-free experience for everyone, regardless of age, body
size, visible or invisible disability, ethnicity, sex characteristics, gender
identity and expression, level of experience, education, socio-economic status,
nationality, personal appearance, race, religion, or sexual identity
and orientation.

We pledge to act and interact in ways that contribute to an open, welcoming,
diverse, inclusive, and healthy community.

## Our Standards

Examples of behavior that contributes to a positive environment for our
community include:

* Demonstrating empathy and kindness toward other people
* Being respectful of differing opinions, viewpoints, and experiences
* Giving and gracefully accepting constructive feedback
* Accepting responsibility and apologizing to those affected by our mistakes,
  and learning from the experience
* Focusing on what is best not just for us as individuals, but for the
  overall community

Examples of unacceptable behavior include:

* The use of sexualized language or imagery, and sexual attention or
  advances of any kind
* Trolling, insulting or derogatory comments, and personal or political attacks
* Public or private harassment
* Publishing others' private information, such as a physical or email
  address, without their explicit permission
* Other conduct which could reasonably be considered inappropriate in a
  professional setting

## Enforcement Responsibilities

Community leaders are responsible for clarifying and enforcing our standards of
acceptable behavior and will take appropriate and fair corrective action in
response to any behavior that they deem inappropriate, threatening, offensive,
or harmful.

Community leaders have the right and responsibility to remove, edit, or reject
comments, commits, code, wiki edits, issues, and other contributions that are
not aligned to this Code of Conduct, and will communicate reasons for moderation
decisions when appropriate.

## Scope

This Code of Conduct applies within all community spaces, and also applies when
an individual is officially representing the community in public spaces.
Examples of representing our community include using an official e-mail address,
posting via an official social media account, or acting as an appointed
representative at an online or offline event.

## Enforcement

Instances of abusive, harassing, or otherwise unacceptable behavior may be
reported to the community leaders responsible for enforcement at
ken.hibino7@gmail.com.
All complaints will be reviewed and investigated promptly and fairly.

All community leaders are obligated to respect the privacy and security of the
reporter of any incident.

## Enforcement Guidelines

Community leaders will follow these Community Impact Guidelines in determining
the consequences for any action they deem in violation of this Code of Conduct:

### 1. Correction

**Community Impact**: Use of inappropriate language or other behavior deemed
unprofessional or unwelcome in the community.

**Consequence**: A private, written warning from community leaders, providing
clarity around the nature of the violation and an explanation of why the
behavior was inappropriate. A public apology may be requested.

### 2. Warning

**Community Impact**: A violation through a single incident or series
of actions.

**Consequence**: A warning with consequences for continued behavior. No
interaction with the people involved, including unsolicited interaction with
those enforcing the Code of Conduct, for a specified period of time. This
includes avoiding interactions in community spaces as well as external channels
like social media. Violating these terms may lead to a temporary or
permanent ban.

### 3. Temporary Ban

|

||||

**Community Impact**: A serious violation of community standards, including

|

||||

sustained inappropriate behavior.

|

||||

|

||||

**Consequence**: A temporary ban from any sort of interaction or public

|

||||

communication with the community for a specified period of time. No public or

|

||||

private interaction with the people involved, including unsolicited interaction

|

||||

with those enforcing the Code of Conduct, is allowed during this period.

|

||||

Violating these terms may lead to a permanent ban.

|

||||

|

||||

### 4. Permanent Ban

|

||||

|

||||

**Community Impact**: Demonstrating a pattern of violation of community

|

||||

standards, including sustained inappropriate behavior, harassment of an

|

||||

individual, or aggression toward or disparagement of classes of individuals.

|

||||

|

||||

**Consequence**: A permanent ban from any sort of public interaction within

|

||||

the community.

|

||||

|

||||

## Attribution

|

||||

|

||||

This Code of Conduct is adapted from the [Contributor Covenant][homepage],

|

||||

version 2.0, available at

|

||||

https://www.contributor-covenant.org/version/2/0/code_of_conduct.html.

|

||||

|

||||

Community Impact Guidelines were inspired by [Mozilla's code of conduct

|

||||

enforcement ladder](https://github.com/mozilla/diversity).

|

||||

|

||||

[homepage]: https://www.contributor-covenant.org

|

||||

|

||||

For answers to common questions about this code of conduct, see the FAQ at

|

||||

https://www.contributor-covenant.org/faq. Translations are available at

|

||||

https://www.contributor-covenant.org/translations.

|

||||

@ -38,14 +38,13 @@ Thank you! We'll try to respond as quickly as possible.

|

||||

## Contributing Code

|

||||

|

||||

1. Fork this repo

|

||||

2. Download your fork `git clone git@github.com:your-username/asynq.git && cd asynq`

|

||||

2. Download your fork `git clone https://github.com/your-username/asynq && cd asynq`

|

||||

3. Create your branch `git checkout -b your-branch-name`

|

||||

4. Make and commit your changes

|

||||

5. Push the branch `git push origin your-branch-name`

|

||||

6. Create a new pull request

|

||||

|

||||

Please try to keep your pull request focused in scope and avoid including unrelated commits.

|

||||

Please run the tests against a Redis Cluster locally with the `--redis_cluster` flag to ensure that your code works with Redis Cluster. TODO: Run tests using Redis Cluster on CI.

|

||||

|

||||

After you have submitted your pull request, we'll try to get back to you as soon as possible. We may suggest some changes or improvements.

|

||||

|

||||

|

||||

11

Makefile

@ -1,11 +0,0 @@

|

||||

ROOT_DIR:=$(shell dirname $(realpath $(firstword $(MAKEFILE_LIST))))

|

||||

|

||||

proto: internal/proto/asynq.proto

|

||||

protoc -I=$(ROOT_DIR)/internal/proto \

|

||||

--go_out=$(ROOT_DIR)/internal/proto \

|

||||

--go_opt=module=github.com/hibiken/asynq/internal/proto \

|

||||

$(ROOT_DIR)/internal/proto/asynq.proto

|

||||

|

||||

.PHONY: lint

|

||||

lint:

|

||||

golangci-lint run

|

||||

289

README.md

@ -1,167 +1,143 @@

|

||||

<img src="https://user-images.githubusercontent.com/11155743/114697792-ffbfa580-9d26-11eb-8e5b-33bef69476dc.png" alt="Asynq logo" width="360px" />

|

||||

# Asynq

|

||||

|

||||

# Simple, reliable & efficient distributed task queue in Go

|

||||

|

||||

[GoDoc](https://godoc.org/github.com/hibiken/asynq)

|

||||

[Go Report Card](https://goreportcard.com/report/github.com/hibiken/asynq)

|

||||

|

||||

[Build Status](https://travis-ci.com/hibiken/asynq)

|

||||

[License: MIT](https://opensource.org/licenses/MIT)

|

||||

[Go Report Card](https://goreportcard.com/report/github.com/hibiken/asynq)

|

||||

[GoDoc](https://godoc.org/github.com/hibiken/asynq)

|

||||

[Gitter chat](https://gitter.im/go-asynq/community)

|

||||

[Codecov](https://codecov.io/gh/hibiken/asynq)

|

||||

|

||||

Asynq is a Go library for queueing tasks and processing them asynchronously with workers. It's backed by [Redis](https://redis.io/) and is designed to be scalable yet easy to get started.

|

||||

## Overview

|

||||

|

||||

Asynq is a Go library for queueing tasks and processing them in the background with workers. It is backed by Redis and is designed to have a low barrier to entry. It should integrate easily into your web stack.

|

||||

|

||||

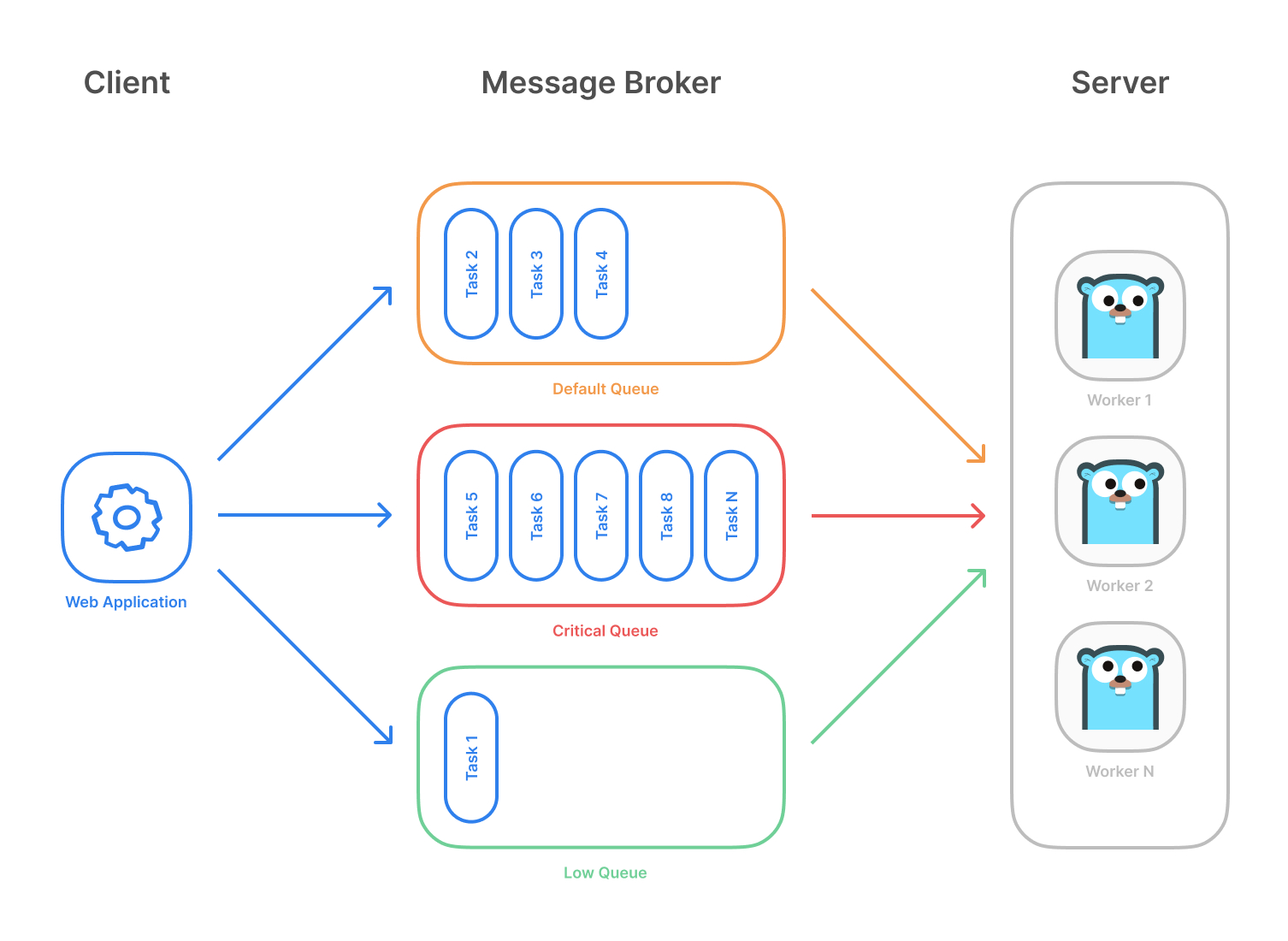

High-level overview of how Asynq works:

|

||||

|

||||

- Client puts tasks on a queue

|

||||

- Server pulls tasks off queues and starts a worker goroutine for each task

|

||||

- Client puts task on a queue

|

||||

- Server pulls task off queues and starts a worker goroutine for each task

|

||||

- Tasks are processed concurrently by multiple workers

|

||||

|

||||

Task queues are used as a mechanism to distribute work across multiple machines. A system can consist of multiple worker servers and brokers, giving way to high availability and horizontal scaling.

|

||||

Task queues are used as a mechanism to distribute work across multiple machines.

|

||||

A system can consist of multiple worker servers and brokers, giving way to high availability and horizontal scaling.

|

||||

|

||||

**Example use case**

|

||||

|

||||

|

||||

|

||||

## Stability and Compatibility

|

||||

|

||||

**Important Note**: Current major version is zero (v0.x.x) to accommodate rapid development and fast iteration while getting early feedback from users (feedback on APIs is appreciated!). The public API could change without a major version update before the v1.0.0 release.

|

||||

|

||||

**Status**: The library is currently undergoing heavy development with frequent, breaking API changes.

|

||||

|

||||

## Features

|

||||

|

||||

- Guaranteed [at least one execution](https://www.cloudcomputingpatterns.org/at_least_once_delivery/) of a task

|

||||

- Scheduling of tasks

|

||||

- Durability since tasks are written to Redis

|

||||

- [Retries](https://github.com/hibiken/asynq/wiki/Task-Retry) of failed tasks

|

||||

- Automatic recovery of tasks in the event of a worker crash

|

||||

- [Weighted priority queues](https://github.com/hibiken/asynq/wiki/Queue-Priority#weighted-priority)

|

||||

- [Strict priority queues](https://github.com/hibiken/asynq/wiki/Queue-Priority#strict-priority)

|

||||

- [Weighted priority queues](https://github.com/hibiken/asynq/wiki/Priority-Queues#weighted-priority-queues)

|

||||

- [Strict priority queues](https://github.com/hibiken/asynq/wiki/Priority-Queues#strict-priority-queues)

|

||||

- Low latency to add a task since writes are fast in Redis

|

||||

- De-duplication of tasks using [unique option](https://github.com/hibiken/asynq/wiki/Unique-Tasks)

|

||||

- Allow [timeout and deadline per task](https://github.com/hibiken/asynq/wiki/Task-Timeout-and-Cancelation)

|

||||

- Allow [aggregating group of tasks](https://github.com/hibiken/asynq/wiki/Task-aggregation) to batch multiple successive operations

|

||||

- [Flexible handler interface with support for middlewares](https://github.com/hibiken/asynq/wiki/Handler-Deep-Dive)

|

||||

- [Ability to pause queue](/tools/asynq/README.md#pause) to stop processing tasks from the queue

|

||||

- [Periodic Tasks](https://github.com/hibiken/asynq/wiki/Periodic-Tasks)

|

||||

- [Support Redis Sentinels](https://github.com/hibiken/asynq/wiki/Automatic-Failover) for high availability

|

||||

- Integration with [Prometheus](https://prometheus.io/) to collect and visualize queue metrics

|

||||

- [Web UI](#web-ui) to inspect and remote-control queues and tasks

|

||||

- [Support Redis Sentinels](https://github.com/hibiken/asynq/wiki/Automatic-Failover) for HA

|

||||

- [CLI](#command-line-tool) to inspect and remote-control queues and tasks
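To make the weighted priority idea from the list above concrete, here is a minimal sketch in which each queue owns a share of picks proportional to its weight. The `pick` function and the weights are assumptions for illustration; this is not how Asynq itself selects queues.

```go
package main

import (
	"fmt"
	"sort"
)

// pick maps a number n in [0, total weight) to a queue name, so that each
// queue owns a slice of the range proportional to its weight.
func pick(weights map[string]int, n int) string {
	names := make([]string, 0, len(weights))
	for name := range weights {
		names = append(names, name)
	}
	sort.Strings(names) // fixed order so the mapping is deterministic
	for _, name := range names {
		if n < weights[name] {
			return name
		}
		n -= weights[name]
	}
	return "" // n was outside the valid range
}

func main() {
	weights := map[string]int{"critical": 6, "default": 3, "low": 1}

	// Walk the whole range once and count how often each queue is picked.
	counts := map[string]int{}
	for n := 0; n < 10; n++ {
		counts[pick(weights, n)]++
	}
	fmt.Println(counts["critical"], counts["default"], counts["low"])
}
```

A worker would typically draw `n` at random rather than walking the range, so that over many picks the shares approach 60%, 30%, and 10% for these weights. A strict priority scheme would instead always drain the highest-priority non-empty queue first.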

|

||||

|

||||

## Stability and Compatibility

|

||||

|

||||

**Status**: The library is relatively stable and is currently undergoing **moderate development** with less frequent breaking API changes.

|

||||

|

||||

> ☝️ **Important Note**: Current major version is zero (`v0.x.x`) to accommodate rapid development and fast iteration while getting early feedback from users (_feedback on APIs is appreciated!_). The public API could change without a major version update before the `v1.0.0` release.

|

||||

|

||||

### Redis Cluster Compatibility

|

||||

|

||||

Some of the Lua scripts in this library may not be compatible with Redis Cluster.

|

||||

|

||||

## Sponsoring

|

||||

If you are using this package in production, **please consider sponsoring the project to show your support!**

|

||||

|

||||

## Quickstart

|

||||

Make sure you have Go installed ([download](https://golang.org/dl/)). The **last two** Go versions are supported (See https://go.dev/dl).

|

||||

|

||||

Initialize your project by creating a folder and then running `go mod init github.com/your/repo` ([learn more](https://blog.golang.org/using-go-modules)) inside the folder. Then install Asynq library with the [`go get`](https://golang.org/cmd/go/#hdr-Add_dependencies_to_current_module_and_install_them) command:

|

||||

First, make sure you are running a Redis server locally.

|

||||

|

||||

```sh

|

||||

go get -u github.com/hibiken/asynq

|

||||

$ redis-server

|

||||

```

|

||||

|

||||

Make sure you're running a Redis server locally or from a [Docker](https://hub.docker.com/_/redis) container. Version `4.0` or higher is required.

|

||||

|

||||

Next, write a package that encapsulates task creation and task handling.

|

||||

|

||||

```go

|

||||

package tasks

|

||||

|

||||

import (

|

||||

"context"

|

||||

"encoding/json"

|

||||

"fmt"

|

||||

"log"

|

||||

"time"

|

||||

|

||||

"github.com/hibiken/asynq"

|

||||

)

|

||||

|

||||

// A list of task types.

|

||||

const (

|

||||

TypeEmailDelivery = "email:deliver"

|

||||

TypeImageResize = "image:resize"

|

||||

EmailDelivery = "email:deliver"

|

||||

ImageProcessing = "image:process"

|

||||

)

|

||||

|

||||

type EmailDeliveryPayload struct {

|

||||

UserID int

|

||||

TemplateID string

|

||||

}

|

||||

|

||||

type ImageResizePayload struct {

|

||||

SourceURL string

|

||||

}

|

||||

|

||||

//----------------------------------------------

|

||||

// Write a function NewXXXTask to create a task.

|

||||

// A task consists of a type and a payload.

|

||||

//----------------------------------------------

|

||||

|

||||

func NewEmailDeliveryTask(userID int, tmplID string) (*asynq.Task, error) {

|

||||

payload, err := json.Marshal(EmailDeliveryPayload{UserID: userID, TemplateID: tmplID})

|

||||

if err != nil {

|

||||

return nil, err

|

||||

}

|

||||

return asynq.NewTask(TypeEmailDelivery, payload), nil

|

||||

func NewEmailDeliveryTask(userID int, tmplID string) *asynq.Task {

|

||||

payload := map[string]interface{}{"user_id": userID, "template_id": tmplID}

|

||||

return asynq.NewTask(EmailDelivery, payload)

|

||||

}

|

||||

|

||||

func NewImageResizeTask(src string) (*asynq.Task, error) {

|

||||

payload, err := json.Marshal(ImageResizePayload{SourceURL: src})

|

||||

if err != nil {

|

||||

return nil, err

|

||||

}

|

||||

// task options can be passed to NewTask, which can be overridden at enqueue time.

|

||||

return asynq.NewTask(TypeImageResize, payload, asynq.MaxRetry(5), asynq.Timeout(20 * time.Minute)), nil

|

||||

func NewImageProcessingTask(src, dst string) *asynq.Task {

|

||||

payload := map[string]interface{}{"src": src, "dst": dst}

|

||||

return asynq.NewTask(ImageProcessing, payload)

|

||||

}

|

||||

|

||||

//---------------------------------------------------------------

|

||||

// Write a function HandleXXXTask to handle the input task.

|

||||

// Note that it satisfies the asynq.HandlerFunc interface.

|

||||

//

|

||||

// Handler doesn't need to be a function. You can define a type

|

||||

// that satisfies asynq.Handler interface. See examples below.

|

||||

//---------------------------------------------------------------

|

||||

|

||||

func HandleEmailDeliveryTask(ctx context.Context, t *asynq.Task) error {

|

||||

var p EmailDeliveryPayload

|

||||

if err := json.Unmarshal(t.Payload(), &p); err != nil {

|

||||

return fmt.Errorf("json.Unmarshal failed: %v: %w", err, asynq.SkipRetry)

|

||||

userID, err := t.Payload.GetInt("user_id")

|

||||

if err != nil {

|

||||

return err

|

||||

}

|

||||

log.Printf("Sending Email to User: user_id=%d, template_id=%s", p.UserID, p.TemplateID)

|

||||

// Email delivery code ...

|

||||

tmplID, err := t.Payload.GetString("template_id")

|

||||

if err != nil {

|

||||

return err

|

||||

}

|

||||

fmt.Printf("Send Email to User: user_id = %d, template_id = %s\n", userID, tmplID)

|

||||

// Email delivery logic ...

|

||||

return nil

|

||||

}

|

||||

|

||||

// ImageProcessor implements asynq.Handler interface.

|

||||

type ImageProcessor struct {

|

||||

type ImageProcessor struct {

|

||||

// ... fields for struct

|

||||

}

|

||||

|

||||

func (processor *ImageProcessor) ProcessTask(ctx context.Context, t *asynq.Task) error {

|

||||

var p ImageResizePayload

|

||||

if err := json.Unmarshal(t.Payload(), &p); err != nil {

|

||||

return fmt.Errorf("json.Unmarshal failed: %v: %w", err, asynq.SkipRetry)

|

||||

func (p *ImageProcessor) ProcessTask(ctx context.Context, t *asynq.Task) error {

|

||||

src, err := t.Payload.GetString("src")

|

||||

if err != nil {

|

||||

return err

|

||||

}

|

||||

log.Printf("Resizing image: src=%s", p.SourceURL)

|

||||

// Image resizing code ...

|

||||

dst, err := t.Payload.GetString("dst")

|

||||

if err != nil {

|

||||

return err

|

||||

}

|

||||

fmt.Printf("Process image: src = %s, dst = %s\n", src, dst)

|

||||

// Image processing logic ...

|

||||

return nil

|

||||

}

|

||||

|

||||

func NewImageProcessor() *ImageProcessor {

|

||||

return &ImageProcessor{}

|

||||

// ... return an instance

|

||||

}

|

||||

```

|

||||

|

||||

In your application code, import the above package and use [`Client`](https://pkg.go.dev/github.com/hibiken/asynq?tab=doc#Client) to put tasks on queues.

|

||||

In your web application code, import the above package and use [`Client`](https://pkg.go.dev/github.com/hibiken/asynq?tab=doc#Client) to put tasks on the queue.

|

||||

A task will be processed asynchronously by a background worker as soon as the task gets enqueued.

|

||||

Scheduled tasks will be stored in Redis and will be enqueued at the specified time.

|

||||

|

||||

```go

|

||||

package main

|

||||

|

||||

import (

|

||||

"log"

|

||||

"time"

|

||||

|

||||

"github.com/hibiken/asynq"

|

||||

@ -171,57 +147,64 @@ import (

|

||||

const redisAddr = "127.0.0.1:6379"

|

||||

|

||||

func main() {

|

||||

client := asynq.NewClient(asynq.RedisClientOpt{Addr: redisAddr})

|

||||

defer client.Close()

|

||||

r := asynq.RedisClientOpt{Addr: redisAddr}

|

||||

c := asynq.NewClient(r)

|

||||

defer c.Close()

|

||||

|

||||

// ------------------------------------------------------

|

||||

// Example 1: Enqueue task to be processed immediately.

|

||||

// Use (*Client).Enqueue method.

|

||||

// ------------------------------------------------------

|

||||

|

||||

task, err := tasks.NewEmailDeliveryTask(42, "some:template:id")

|

||||

t := tasks.NewEmailDeliveryTask(42, "some:template:id")

|

||||

err := c.Enqueue(t)

|

||||

if err != nil {

|

||||

log.Fatalf("could not create task: %v", err)

|

||||

log.Fatalf("could not enqueue task: %v", err)

|

||||

}

|

||||

info, err := client.Enqueue(task)

|

||||

if err != nil {

|

||||

log.Fatalf("could not enqueue task: %v", err)

|

||||

}

|

||||

log.Printf("enqueued task: id=%s queue=%s", info.ID, info.Queue)

|

||||

|

||||

|

||||

// ------------------------------------------------------------

|

||||

// Example 2: Schedule task to be processed in the future.

|

||||

// Use ProcessIn or ProcessAt option.

|

||||

// Use (*Client).EnqueueIn or (*Client).EnqueueAt.

|

||||

// ------------------------------------------------------------

|

||||

|

||||

info, err = client.Enqueue(task, asynq.ProcessIn(24*time.Hour))

|

||||

t = tasks.NewEmailDeliveryTask(42, "other:template:id")

|

||||

err = c.EnqueueIn(24*time.Hour, t)

|

||||

if err != nil {

|

||||

log.Fatalf("could not schedule task: %v", err)

|

||||

log.Fatalf("could not schedule task: %v", err)

|

||||

}

|

||||

log.Printf("enqueued task: id=%s queue=%s", info.ID, info.Queue)

|

||||

|

||||

|

||||

// ----------------------------------------------------------------------------

|

||||

// Example 3: Set other options to tune task processing behavior.

|

||||

// Example 3: Set options to tune task processing behavior.

|

||||

// Options include MaxRetry, Queue, Timeout, Deadline, Unique etc.

|

||||

// ----------------------------------------------------------------------------

|

||||

|

||||

task, err = tasks.NewImageResizeTask("https://example.com/myassets/image.jpg")

|

||||

c.SetDefaultOptions(tasks.ImageProcessing, asynq.MaxRetry(10), asynq.Timeout(time.Minute))

|

||||

|

||||

t = tasks.NewImageProcessingTask("some/blobstore/url", "other/blobstore/url")

|

||||

err = c.Enqueue(t)

|

||||

if err != nil {

|

||||

log.Fatalf("could not create task: %v", err)

|

||||

log.Fatalf("could not enqueue task: %v", err)

|

||||

}

|

||||

info, err = client.Enqueue(task, asynq.MaxRetry(10), asynq.Timeout(3 * time.Minute))

|

||||

|

||||

// ---------------------------------------------------------------------------

|

||||

// Example 4: Pass options to tune task processing behavior at enqueue time.

|

||||

// Options passed at enqueue time override default ones, if any.

|

||||

// ---------------------------------------------------------------------------

|

||||

|

||||

t = tasks.NewImageProcessingTask("some/blobstore/url", "other/blobstore/url")

|

||||

err = c.Enqueue(t, asynq.Queue("critical"), asynq.Timeout(30*time.Second))

|

||||

if err != nil {

|

||||

log.Fatalf("could not enqueue task: %v", err)

|

||||

log.Fatalf("could not enqueue task: %v", err)

|

||||

}

|

||||

log.Printf("enqueued task: id=%s queue=%s", info.ID, info.Queue)

|

||||

}

|

||||

```

|

||||

|

||||

Next, start a worker server to process these tasks in the background. To start the background workers, use [`Server`](https://pkg.go.dev/github.com/hibiken/asynq?tab=doc#Server) and provide your [`Handler`](https://pkg.go.dev/github.com/hibiken/asynq?tab=doc#Handler) to process the tasks.

|

||||

Next, create a worker server to process these tasks in the background.

|

||||

To start the background workers, use [`Server`](https://pkg.go.dev/github.com/hibiken/asynq?tab=doc#Server) and provide your [`Handler`](https://pkg.go.dev/github.com/hibiken/asynq?tab=doc#Handler) to process the tasks.

|

||||

|

||||

You can optionally use [`ServeMux`](https://pkg.go.dev/github.com/hibiken/asynq?tab=doc#ServeMux) to create a handler, just as you would with [`net/http`](https://golang.org/pkg/net/http/) Handler.

|

||||

You can optionally use [`ServeMux`](https://pkg.go.dev/github.com/hibiken/asynq?tab=doc#ServeMux) to create a handler, just as you would with [`"net/http"`](https://golang.org/pkg/net/http/) Handler.

|

||||

|

||||

```go

|

||||

package main

|

||||

@ -236,25 +219,24 @@ import (

|

||||

const redisAddr = "127.0.0.1:6379"

|

||||

|

||||

func main() {

|

||||

srv := asynq.NewServer(

|

||||

asynq.RedisClientOpt{Addr: redisAddr},

|

||||

asynq.Config{

|

||||

// Specify how many concurrent workers to use

|

||||

Concurrency: 10,

|

||||

// Optionally specify multiple queues with different priority.

|

||||

Queues: map[string]int{

|

||||

"critical": 6,

|

||||

"default": 3,

|

||||

"low": 1,

|

||||

},

|

||||

// See the godoc for other configuration options

|

||||

r := asynq.RedisClientOpt{Addr: redisAddr}

|

||||

|

||||

srv := asynq.NewServer(r, asynq.Config{

|

||||

// Specify how many concurrent workers to use

|

||||

Concurrency: 10,

|

||||

// Optionally specify multiple queues with different priority.

|

||||

Queues: map[string]int{

|

||||

"critical": 6,

|

||||

"default": 3,

|

||||

"low": 1,

|

||||

},

|

||||

)

|

||||

// See the godoc for other configuration options

|

||||

})

|

||||

|

||||

// mux maps a type to a handler

|

||||

mux := asynq.NewServeMux()

|

||||

mux.HandleFunc(tasks.TypeEmailDelivery, tasks.HandleEmailDeliveryTask)

|

||||

mux.Handle(tasks.TypeImageResize, tasks.NewImageProcessor())

|

||||

mux.HandleFunc(tasks.EmailDelivery, tasks.HandleEmailDeliveryTask)

|

||||

mux.Handle(tasks.ImageProcessing, tasks.NewImageProcessor())

|

||||

// ...register other handlers...

|

||||

|

||||

if err := srv.Run(mux); err != nil {

|

||||

@ -263,55 +245,52 @@ func main() {

|

||||

}

|

||||

```

|

||||

|

||||

For a more detailed walk-through of the library, see our [Getting Started](https://github.com/hibiken/asynq/wiki/Getting-Started) guide.

|

||||

For a more detailed walk-through of the library, see our [Getting Started Guide](https://github.com/hibiken/asynq/wiki/Getting-Started).

|

||||

|

||||

To learn more about `asynq` features and APIs, see the package [godoc](https://godoc.org/github.com/hibiken/asynq).

|

||||

|

||||

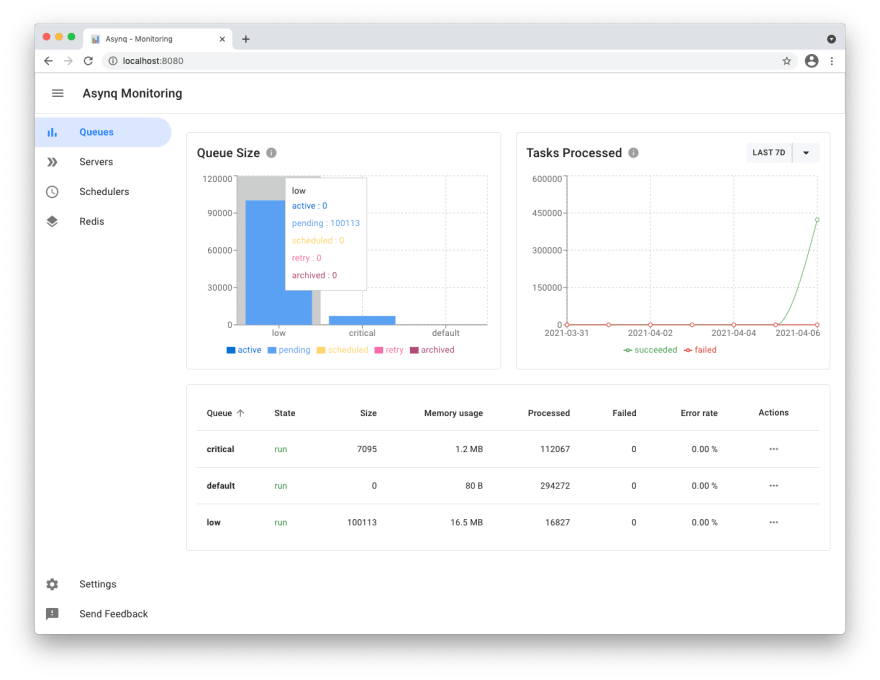

## Web UI

|

||||

|

||||

[Asynqmon](https://github.com/hibiken/asynqmon) is a web based tool for monitoring and administrating Asynq queues and tasks.

|

||||

|

||||

Here are a few screenshots of the Web UI:

|

||||

|

||||

**Queues view**

|

||||

|

||||

|

||||

|

||||

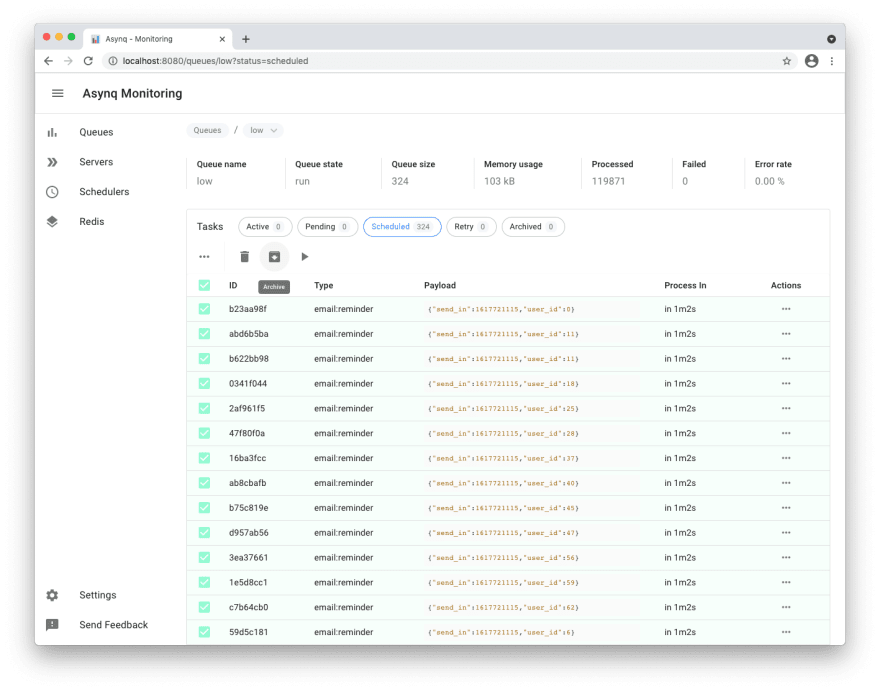

**Tasks view**

|

||||

|

||||

|

||||

|

||||

**Metrics view**

|

||||

<img width="1532" alt="Screen Shot 2021-12-19 at 4 37 19 PM" src="https://user-images.githubusercontent.com/10953044/146777420-cae6c476-bac6-469c-acce-b2f6584e8707.png">

|

||||

|

||||

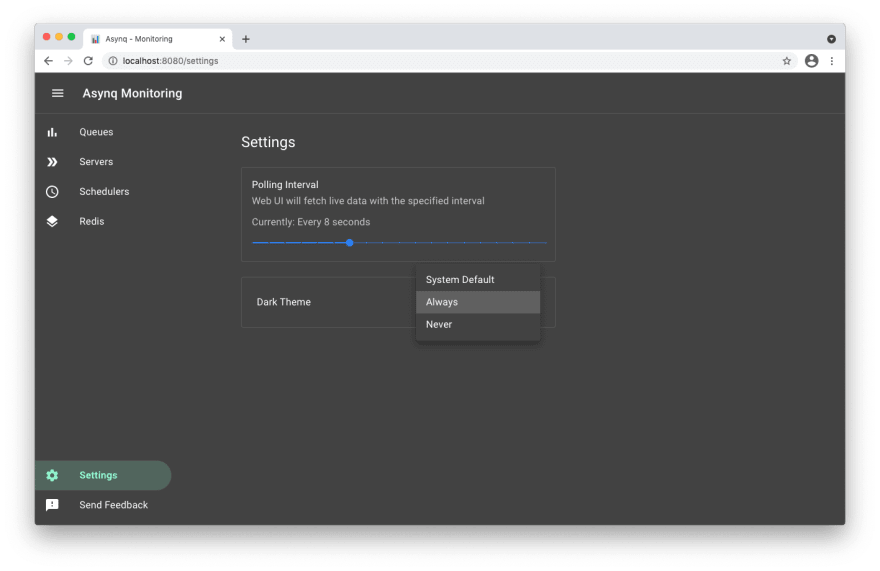

**Settings and adaptive dark mode**

|

||||

|

||||

|

||||

|

||||

For details on how to use the tool, refer to the tool's [README](https://github.com/hibiken/asynqmon#readme).

|

||||

To learn more about `asynq` features and APIs, see our [Wiki](https://github.com/hibiken/asynq/wiki) and [godoc](https://godoc.org/github.com/hibiken/asynq).

|

||||

|

||||

## Command Line Tool

|

||||

|

||||

Asynq ships with a command line tool to inspect the state of queues and tasks.

|

||||

|

||||

To install the CLI tool, run the following command:

|

||||

Here's an example of running the `stats` command.

|

||||

|

||||

```sh

|

||||

go install github.com/hibiken/asynq/tools/asynq@latest

|

||||

```

|

||||

|

||||

Here's an example of running the `asynq dash` command:

|

||||

|

||||

|

||||

|

||||

|

||||

For details on how to use the tool, refer to the tool's [README](/tools/asynq/README.md).

|

||||

|

||||

## Installation

|

||||

|

||||

To install `asynq` library, run the following command:

|

||||

|

||||

```sh

|

||||

go get -u github.com/hibiken/asynq

|

||||

```

|

||||

|

||||

To install the CLI tool, run the following command:

|

||||

|

||||

```sh

|

||||

go get -u github.com/hibiken/asynq/tools/asynq

|

||||

```

|

||||

|

||||

## Requirements

|

||||

|

||||

| Dependency | Version |

|

||||

| -------------------------- | ------- |

|

||||

| [Redis](https://redis.io/) | v2.8+ |

|

||||

| [Go](https://golang.org/) | v1.13+ |

|

||||

|

||||

## Contributing

|

||||

|

||||

We are open to, and grateful for, any contributions (GitHub issues/PRs, feedback on [Gitter channel](https://gitter.im/go-asynq/community), etc) made by the community.

|

||||

|

||||

We are open to, and grateful for, any contributions (GitHub issues/pull requests, feedback on Gitter channel, etc.) made by the community.

|

||||

Please see the [Contribution Guide](/CONTRIBUTING.md) before contributing.

|

||||

|

||||

## Acknowledgements

|

||||

|

||||

- [Sidekiq](https://github.com/mperham/sidekiq) : Many of the design ideas are taken from sidekiq and its Web UI

|

||||

- [RQ](https://github.com/rq/rq) : Client APIs are inspired by rq library.

|

||||

- [Cobra](https://github.com/spf13/cobra) : Asynq CLI is built with cobra

|

||||

|

||||

## License

|

||||

|

||||