Mirror of https://github.com/hibiken/asynq.git, synced 2025-10-20 09:16:12 +08:00

Compare commits

145 Commits

| SHA1 |
|---|
| d02b722d8a |
| 99c7ebeef2 |
| bf54621196 |
| 27baf6de0d |
| 1bd0bee1e5 |
| a9feec5967 |
| e01c6379c8 |
| a0df047f71 |
| 68dd6d9a9d |
| 6cce31a134 |
| f9d7af3def |
| b0321fb465 |
| 7776c7ae53 |
| 709ca79a2b |
| 08d8f0b37c |
| 385323b679 |
| 77604af265 |
| 4765742e8a |
| 68839dc9d3 |
| 8922d2423a |
| b358de907e |
| 8ee1825e67 |
| c8bda26bed |
| 8aeeb61c9d |
| 96c51fdc23 |
| ea9086fd8b |
| e63d51da0c |
| cd351d49b9 |
| 87264b66f3 |
| 62168b8d0d |
| 840f7245b1 |
| 12f4c7cf6e |
| 0ec3b55e6b |
| 4bcc5ab6aa |
| 456edb6b71 |
| b835090ad8 |
| 09cbea66f6 |
| b9c2572203 |
| 0bf767cf21 |
| 1812d05d21 |
| 4af65d5fa5 |
| a19ad19382 |
| 8117ce8972 |
| d98ecdebb4 |
| ffe9aa74b3 |
| d2d4029aba |
| 76bd865ebc |
| 136d1c9ea9 |
| 52e04355d3 |
| cde3e57c6c |
| dd66acef1b |
| 30a3d9641a |
| 961582cba6 |
| 430dbb298e |
| 675826be5f |
| 62f4e46b73 |
| a500f8a534 |
| bcfeff38ed |
| 12a90f6a8d |
| 807624e7dd |
| 4d65024bd7 |
| 76486b5cb4 |
| 1db516c53c |
| cb5bdf245c |
| 267493ccef |
| 5d7f1b6a80 |
| 77ded502ab |
| f2284be43d |
| 3cadab55cb |
| 298a420f9f |
| b1d717c842 |
| 56e5762eea |
| 5ec41e388b |
| 9c95c41651 |
| 476812475e |
| 7af3981929 |
| 2516c4baba |
| ebe482a65c |
| 3e9fc2f972 |
| 63ce9ed0f9 |
| 32d3f329b9 |
| 544c301a8b |
| 8b997d2fab |
| 901105a8d7 |
| aaa3f1d4fd |
| 4722ca2d3d |
| 6a9d9fd717 |
| de28c1ea19 |
| f618f5b1f5 |
| aa936466b3 |
| 5d1ec70544 |
| d1d3be9b00 |
| bc77f6fe14 |
| efe197a47b |
| 97b5516183 |
| 8eafa03ca7 |
| 430b01c9aa |
| 14c381dc40 |
| e13122723a |
| eba7c4e085 |
| bfde0b6283 |
| afde6a7266 |
| 6529a1e0b1 |
| c9a6ab8ae1 |
| 557c1a5044 |
| 0236eb9a1c |
| 3c2b2cf4a3 |
| 04df71198d |
| 2884044e75 |
| 3719fad396 |
| 42c7ac0746 |
| d331ff055d |
| ccb682853e |
| 7c3ad9e45c |
| ea23db4f6b |
| 00a25ca570 |
| 7235041128 |
| a150d18ed7 |
| 0712e90f23 |
| c5100a9c23 |
| 196d66f221 |
| 38509e309f |
| f4dd8fe962 |
| c06e9de97d |
| 52d536a8f5 |
| f9c0673116 |
| b604d25937 |
| dfdf530a24 |
| e9239260ae |
| 8f9d5a3352 |
| c4dc993241 |
| 37dfd746d4 |
| 8d6e4167ab |
| 476862dd7b |
| dcd873fa2a |
| 2604bb2192 |
| 942345ee80 |
| 1f059eeee1 |
| 4ae73abdaa |
| 96b2318300 |
| 8312515e64 |
| 50e7f38365 |
| fadcae76d6 |
| a2d4ead989 |
| 82b6828f43 |

82 .github/workflows/benchstat.yml (vendored, Normal file)
@@ -0,0 +1,82 @@
+# This workflow runs benchmarks against the current branch,
+# compares them to benchmarks against master,
+# and uploads the results as an artifact.
+
+name: benchstat
+
+on: [pull_request]
+
+jobs:
+  incoming:
+    runs-on: ubuntu-latest
+    services:
+      redis:
+        image: redis
+        ports:
+        - 6379:6379
+    steps:
+      - name: Checkout
+        uses: actions/checkout@v2
+      - name: Set up Go
+        uses: actions/setup-go@v2
+        with:
+          go-version: 1.16.x
+      - name: Benchmark
+        run: go test -run=^$ -bench=. -count=5 -timeout=60m ./... | tee -a new.txt
+      - name: Upload Benchmark
+        uses: actions/upload-artifact@v2
+        with:
+          name: bench-incoming
+          path: new.txt
+
+  current:
+    runs-on: ubuntu-latest
+    services:
+      redis:
+        image: redis
+        ports:
+        - 6379:6379
+    steps:
+      - name: Checkout
+        uses: actions/checkout@v2
+        with:
+          ref: master
+      - name: Set up Go
+        uses: actions/setup-go@v2
+        with:
+          go-version: 1.15.x
+      - name: Benchmark
+        run: go test -run=^$ -bench=. -count=5 -timeout=60m ./... | tee -a old.txt
+      - name: Upload Benchmark
+        uses: actions/upload-artifact@v2
+        with:
+          name: bench-current
+          path: old.txt
+
+  benchstat:
+    needs: [incoming, current]
+    runs-on: ubuntu-latest
+    steps:
+      - name: Checkout
+        uses: actions/checkout@v2
+      - name: Set up Go
+        uses: actions/setup-go@v2
+        with:
+          go-version: 1.15.x
+      - name: Install benchstat
+        run: go get -u golang.org/x/perf/cmd/benchstat
+      - name: Download Incoming
+        uses: actions/download-artifact@v2
+        with:
+          name: bench-incoming
+      - name: Download Current
+        uses: actions/download-artifact@v2
+        with:
+          name: bench-current
+      - name: Benchstat Results
+        run: benchstat old.txt new.txt | tee -a benchstat.txt
+      - name: Upload benchstat results
+        uses: actions/upload-artifact@v2
+        with:
+          name: benchstat
+          path: benchstat.txt
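The `benchstat` job feeds `old.txt` (master) and `new.txt` (the pull request) to `benchstat`. To make that comparison concrete, here is a deliberately simplified Go sketch: it parses the `ns/op` column of `go test -bench` output and reports the change in per-benchmark means. It is not benchstat's method (benchstat also reports variation and applies a significance test across the `-count=5` runs), and `meanNsPerOp` and `deltaPercent` are hypothetical helpers. Note also that the `incoming` job installs Go 1.16.x while `current` installs 1.15.x, so a measured delta can include toolchain effects.

```go
package main

import (
	"bufio"
	"strconv"
	"strings"
)

// meanNsPerOp parses `go test -bench` output and returns the mean ns/op for
// each benchmark name. Non-result lines (goos:, PASS, ok ...) are skipped.
func meanNsPerOp(output string) map[string]float64 {
	sums := map[string]float64{}
	counts := map[string]int{}
	sc := bufio.NewScanner(strings.NewReader(output))
	for sc.Scan() {
		// A result line looks like: BenchmarkName-8   1000   1234 ns/op ...
		fields := strings.Fields(sc.Text())
		if len(fields) < 4 || !strings.HasPrefix(fields[0], "Benchmark") {
			continue
		}
		for i := 2; i+1 < len(fields); i++ {
			if fields[i+1] != "ns/op" {
				continue
			}
			if v, err := strconv.ParseFloat(fields[i], 64); err == nil {
				sums[fields[0]] += v
				counts[fields[0]]++
			}
			break
		}
	}
	means := make(map[string]float64, len(sums))
	for name, sum := range sums {
		means[name] = sum / float64(counts[name])
	}
	return means
}

// deltaPercent is the relative change from the old mean to the new mean;
// negative values mean the new code is faster.
func deltaPercent(oldMean, newMean float64) float64 {
	return (newMean - oldMean) / oldMean * 100
}
```

With `-count=5`, each benchmark contributes five result lines per file and the sketch simply averages them; a real comparison should also look at how spread out those samples are, which is what benchstat adds.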

35 .github/workflows/build.yml (vendored, Normal file)
@@ -0,0 +1,35 @@
+name: build
+
+on: [push, pull_request]
+
+jobs:
+  build:
+    strategy:
+      matrix:
+        os: [ubuntu-latest]
+        go-version: [1.13.x, 1.14.x, 1.15.x, 1.16.x]
+    runs-on: ${{ matrix.os }}
+    services:
+      redis:
+        image: redis
+        ports:
+        - 6379:6379
+    steps:
+    - uses: actions/checkout@v2
+
+    - name: Set up Go
+      uses: actions/setup-go@v2
+      with:
+        go-version: ${{ matrix.go-version }}
+
+    - name: Build
+      run: go build -v ./...
+
+    - name: Test
+      run: go test -race -v -coverprofile=coverage.txt -covermode=atomic ./...
+
+    - name: Benchmark Test
+      run: go test -run=^$ -bench=. -loglevel=debug ./...
+
+    - name: Upload coverage to Codecov
+      uses: codecov/codecov-action@v1
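The `Benchmark Test` step disables ordinary tests by giving `-run` a pattern that selects nothing (the removed `.travis.yml` used `-run=XXX` for the same purpose). `-run` takes a regular expression matched against test names: `^$` matches only the empty string, while `XXX` works only as long as no test name happens to contain `XXX`. The sketch below checks this with the standard `regexp` package; `matchingTests` and the test names are made up, and it ignores how `go test` splits subtest names on `/`.

```go
package main

import "regexp"

// matchingTests returns the names a `-run=<pattern>` style filter would
// select. Simplified: it matches whole names and ignores subtest splitting.
func matchingTests(pattern string, names []string) []string {
	re := regexp.MustCompile(pattern)
	var selected []string
	for _, n := range names {
		if re.MatchString(n) {
			selected = append(selected, n)
		}
	}
	return selected
}
```

Because every test name is non-empty, `^$` can never select one, which makes it a more robust "run no tests" pattern than an arbitrary string such as `XXX`.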

5 .gitignore (vendored)
@@ -18,4 +18,7 @@
 /tools/asynq/asynq
 
 # Ignore asynq config file
 .asynq.*
+
+# Ignore editor config files
+.vscode

13 .travis.yml
@@ -1,13 +0,0 @@
-language: go
-go_import_path: github.com/hibiken/asynq
-git:
-  depth: 1
-go: [1.13.x, 1.14.x, 1.15.x]
-script:
-  - go test -race -v -coverprofile=coverage.txt -covermode=atomic ./...
-  - go test -run=XXX -bench=. -loglevel=debug ./...
-services:
-  - redis-server
-after_success:
-  - bash ./.travis/benchcmp.sh
-  - bash <(curl -s https://codecov.io/bash)

@@ -1,18 +0,0 @@
-if [ "${TRAVIS_PULL_REQUEST_BRANCH:-$TRAVIS_BRANCH}" != "master" ]; then
-    REMOTE_URL="$(git config --get remote.origin.url)";
-    cd ${TRAVIS_BUILD_DIR}/.. && \
-    git clone ${REMOTE_URL} "${TRAVIS_REPO_SLUG}-bench" && \
-    cd "${TRAVIS_REPO_SLUG}-bench" && \
-
-    # Benchmark master
-    git checkout master && \
-    go test -run=XXX -bench=. ./... > master.txt && \
-
-    # Benchmark feature branch
-    git checkout ${TRAVIS_COMMIT} && \
-    go test -run=XXX -bench=. ./... > feature.txt && \
-
-    # compare two benchmarks
-    go get -u golang.org/x/tools/cmd/benchcmp && \
-    benchcmp master.txt feature.txt;
-fi

146 CHANGELOG.md
@@ -7,14 +7,132 @@ and this project adheres to [Semantic Versioning](https://semver.org/spec/v2.0.0
 
 ## [Unreleased]
 
+## [0.18.2] - 2021-06-29
+
+### Changed
+
+- NewTask function now takes array of bytes as payload.
+- Task `Type` and `Payload` should be accessed by a method call.
+- `Server` API has changed. Renamed `Quiet` to `Stop`. Renamed `Stop` to `Shutdown`. _Note:_ As a result of this renaming, the behavior of `Stop` has changed. Please update the exising code to call `Shutdown` where it used to call `Stop`.
+- `Scheduler` API has changed. Renamed `Stop` to `Shutdown`.
+- Requires redis v4.0+ for multiple field/value pair support
+- `Client.Enqueue` now returns `TaskInfo`
+- `Inspector.RunTaskByKey` is replaced with `Inspector.RunTask`
+- `Inspector.DeleteTaskByKey` is replaced with `Inspector.DeleteTask`
+- `Inspector.ArchiveTaskByKey` is replaced with `Inspector.ArchiveTask`
+- `inspeq` package is removed. All types and functions from the package is moved to `asynq` package.
+- `WorkerInfo` field names have changed.
+- `Inspector.CancelActiveTask` is renamed to `Inspector.CancelProcessing`
+
+## [0.17.2] - 2021-06-06
+
+### Fixed
+
+- Free unique lock when task is deleted (https://github.com/hibiken/asynq/issues/275).
+
+## [0.17.1] - 2021-04-04
+
+### Fixed
+
+- Fix bug in internal `RDB.memoryUsage` method.
+
+## [0.17.0] - 2021-03-24
+
+### Added
+
+- `DialTimeout`, `ReadTimeout`, and `WriteTimeout` options are added to `RedisConnOpt`.
+
+## [0.16.1] - 2021-03-20
+
+### Fixed
+
+- Replace `KEYS` command with `SCAN` as recommended by [redis doc](https://redis.io/commands/KEYS).
+
+## [0.16.0] - 2021-03-10
+
+### Added
+
+- `Unregister` method is added to `Scheduler` to remove a registered entry.
+
+## [0.15.0] - 2021-01-31
+
+**IMPORTATNT**: All `Inspector` related code are moved to subpackage "github.com/hibiken/asynq/inspeq"
+
+### Changed
+
+- `Inspector` related code are moved to subpackage "github.com/hibken/asynq/inspeq".
+- `RedisConnOpt` interface has changed slightly. If you have been passing `RedisClientOpt`, `RedisFailoverClientOpt`, or `RedisClusterClientOpt` as a pointer,
+  update your code to pass as a value.
+- `ErrorMsg` field in `RetryTask` and `ArchivedTask` was renamed to `LastError`.
+
+### Added
+
+- `MaxRetry`, `Retried`, `LastError` fields were added to all task types returned from `Inspector`.
+- `MemoryUsage` field was added to `QueueStats`.
+- `DeleteAllPendingTasks`, `ArchiveAllPendingTasks` were added to `Inspector`
+- `DeleteTaskByKey` and `ArchiveTaskByKey` now supports deleting/archiving `PendingTask`.
+- asynq CLI now supports deleting/archiving pending tasks.
+
+## [0.14.1] - 2021-01-19
+
+### Fixed
+
+- `go.mod` file for CLI
+
+## [0.14.0] - 2021-01-14
+
+**IMPORTATNT**: Please run `asynq migrate` command to migrate from the previous versions.
+
+### Changed
+
+- Renamed `DeadTask` to `ArchivedTask`.
+- Renamed the operation `Kill` to `Archive` in `Inpsector`.
+- Print stack trace when Handler panics.
+- Include a file name and a line number in the error message when recovering from a panic.
+
+### Added
+
+- `DefaultRetryDelayFunc` is now a public API, which can be used in the custom `RetryDelayFunc`.
+- `SkipRetry` error is added to be used as a return value from `Handler`.
+- `Servers` method is added to `Inspector`
+- `CancelActiveTask` method is added to `Inspector`.
+- `ListSchedulerEnqueueEvents` method is added to `Inspector`.
+- `SchedulerEntries` method is added to `Inspector`.
+- `DeleteQueue` method is added to `Inspector`.
+
+## [0.13.1] - 2020-11-22
+
+### Fixed
+
+- Fixed processor to wait for specified time duration before forcefully shutdown workers.
+
+## [0.13.0] - 2020-10-13
+
+### Added
+
+- `Scheduler` type is added to enable periodic tasks. See the godoc for its APIs and [wiki](https://github.com/hibiken/asynq/wiki/Periodic-Tasks) for the getting-started guide.
+
+### Changed
+
+- interface `Option` has changed. See the godoc for the new interface.
+  This change would have no impact as long as you are using exported functions (e.g. `MaxRetry`, `Queue`, etc)
+  to create `Option`s.
+
+### Added
+
+- `Payload.String() string` method is added
+- `Payload.MarshalJSON() ([]byte, error)` method is added
 
 ## [0.12.0] - 2020-09-12
 
 **IMPORTANT**: If you are upgrading from a previous version, please install the latest version of the CLI `go get -u github.com/hibiken/asynq/tools/asynq` and run `asynq migrate` command. No process should be writing to Redis while you run the migration command.
 
 ## The semantics of queue have changed
-Previously, we called tasks that are ready to be processed *"Enqueued tasks"*, and other tasks that are scheduled to be processed in the future *"Scheduled tasks"*, etc.
-We changed the semantics of *"Enqueue"* slightly; All tasks that client pushes to Redis are *Enqueued* to a queue. Within a queue, tasks will transition from one state to another.
+Previously, we called tasks that are ready to be processed _"Enqueued tasks"_, and other tasks that are scheduled to be processed in the future _"Scheduled tasks"_, etc.
+We changed the semantics of _"Enqueue"_ slightly; All tasks that client pushes to Redis are _Enqueued_ to a queue. Within a queue, tasks will transition from one state to another.
 Possible task states are:
 
 - `Pending`: task is ready to be processed (previously called "Enqueued")
 - `Active`: tasks is currently being processed (previously called "InProgress")
 - `Scheduled`: task is scheduled to be processed in the future

@@ -26,23 +144,28 @@ Possible task states are:
 ---
 
 ### Changed
 
 #### `Client`
 
 Use `ProcessIn` or `ProcessAt` option to schedule a task instead of `EnqueueIn` or `EnqueueAt`.
 
 | Previously | v0.12.0 |
-|-----------------------------|--------------------------------------------|
+| --------------------------- | ------------------------------------------ |
 | `client.EnqueueAt(t, task)` | `client.Enqueue(task, asynq.ProcessAt(t))` |
 | `client.EnqueueIn(d, task)` | `client.Enqueue(task, asynq.ProcessIn(d))` |
 
 #### `Inspector`
 
 All Inspector methods are scoped to a queue, and the methods take `qname (string)` as the first argument.
 `EnqueuedTask` is renamed to `PendingTask` and its corresponding methods.
 `InProgressTask` is renamed to `ActiveTask` and its corresponding methods.
 Command "Enqueue" is replaced by the verb "Run" (e.g. `EnqueueAllScheduledTasks` --> `RunAllScheduledTasks`)
 
 #### `CLI`
 
 CLI commands are restructured to use subcommands. Commands are organized into a few management commands:
 To view details on any command, use `asynq help <command> <subcommand>`.
 
 - `asynq stats`
 - `asynq queue [ls inspect history rm pause unpause]`
 - `asynq task [ls cancel delete kill run delete-all kill-all run-all]`

@@ -51,19 +174,23 @@ To view details on any command, use `asynq help <command> <subcommand>`.
 ### Added
 
 #### `RedisConnOpt`
 
 - `RedisClusterClientOpt` is added to connect to Redis Cluster.
 - `Username` field is added to all `RedisConnOpt` types in order to authenticate connection when Redis ACLs are used.
 
 #### `Client`
 
 - `ProcessIn(d time.Duration) Option` and `ProcessAt(t time.Time) Option` are added to replace `EnqueueIn` and `EnqueueAt` functionality.
 
 #### `Inspector`
 
 - `Queues() ([]string, error)` method is added to get all queue names.
 - `ClusterKeySlot(qname string) (int64, error)` method is added to get queue's hash slot in Redis cluster.
 - `ClusterNodes(qname string) ([]ClusterNode, error)` method is added to get a list of Redis cluster nodes for the given queue.
 - `Close() error` method is added to close connection with redis.
 
 ### `Handler`
 
 - `GetQueueName(ctx context.Context) (string, bool)` helper is added to extract queue name from a context.
 
 ## [0.11.0] - 2020-07-28

@@ -80,7 +207,7 @@ To view details on any command, use `asynq help <command> <subcommand>`.
 
 - All tasks now requires timeout or deadline. By default, timeout is set to 30 mins.
 - Tasks that exceed its deadline are automatically retried.
 - Encoding schema for task message has changed. Please install the latest CLI and run `migrate` command if
   you have tasks enqueued with the previous version of asynq.
 - API of `(*Client).Enqueue`, `(*Client).EnqueueIn`, and `(*Client).EnqueueAt` has changed to return a `*Result`.
 - API of `ErrorHandler` has changed. It now takes context as the first argument and removed `retried`, `maxRetry` from the argument list.

@@ -98,7 +225,6 @@ To view details on any command, use `asynq help <command> <subcommand>`.
 
 - Fixes the JSON number overflow issue (https://github.com/hibiken/asynq/issues/166).
 
-
 ## [0.9.2] - 2020-06-08
 
 ### Added

7 Makefile (Normal file)
@@ -0,0 +1,7 @@
+ROOT_DIR:=$(shell dirname $(realpath $(firstword $(MAKEFILE_LIST))))
+
+proto: internal/proto/asynq.proto
+	protoc -I=$(ROOT_DIR)/internal/proto \
+		--go_out=$(ROOT_DIR)/internal/proto \
+		--go_opt=module=github.com/hibiken/asynq/internal/proto \
+		$(ROOT_DIR)/internal/proto/asynq.proto

228

README.md

228

README.md

@@ -1,32 +1,26 @@

|

|||||||

# Asynq

|

<img src="https://user-images.githubusercontent.com/11155743/114697792-ffbfa580-9d26-11eb-8e5b-33bef69476dc.png" alt="Asynq logo" width="360px" />

|

||||||

|

|

||||||

|

# Simple, reliable & efficient distributed task queue in Go

|

||||||

|

|

||||||

[](https://travis-ci.com/hibiken/asynq)

|

|

||||||

[](https://opensource.org/licenses/MIT)

|

|

||||||

[](https://goreportcard.com/report/github.com/hibiken/asynq)

|

|

||||||

[](https://godoc.org/github.com/hibiken/asynq)

|

[](https://godoc.org/github.com/hibiken/asynq)

|

||||||

|

[](https://goreportcard.com/report/github.com/hibiken/asynq)

|

||||||

|

|

||||||

|

[](https://opensource.org/licenses/MIT)

|

||||||

[](https://gitter.im/go-asynq/community)

|

[](https://gitter.im/go-asynq/community)

|

||||||

[](https://codecov.io/gh/hibiken/asynq)

|

|

||||||

|

|

||||||

## Overview

|

Asynq is a Go library for queueing tasks and processing them asynchronously with workers. It's backed by [Redis](https://redis.io/) and is designed to be scalable yet easy to get started.

|

||||||

|

|

||||||

Asynq is a Go library for queueing tasks and processing them asynchronously with workers. It's backed by Redis and is designed to be scalable yet easy to get started.

|

|

||||||

|

|

||||||

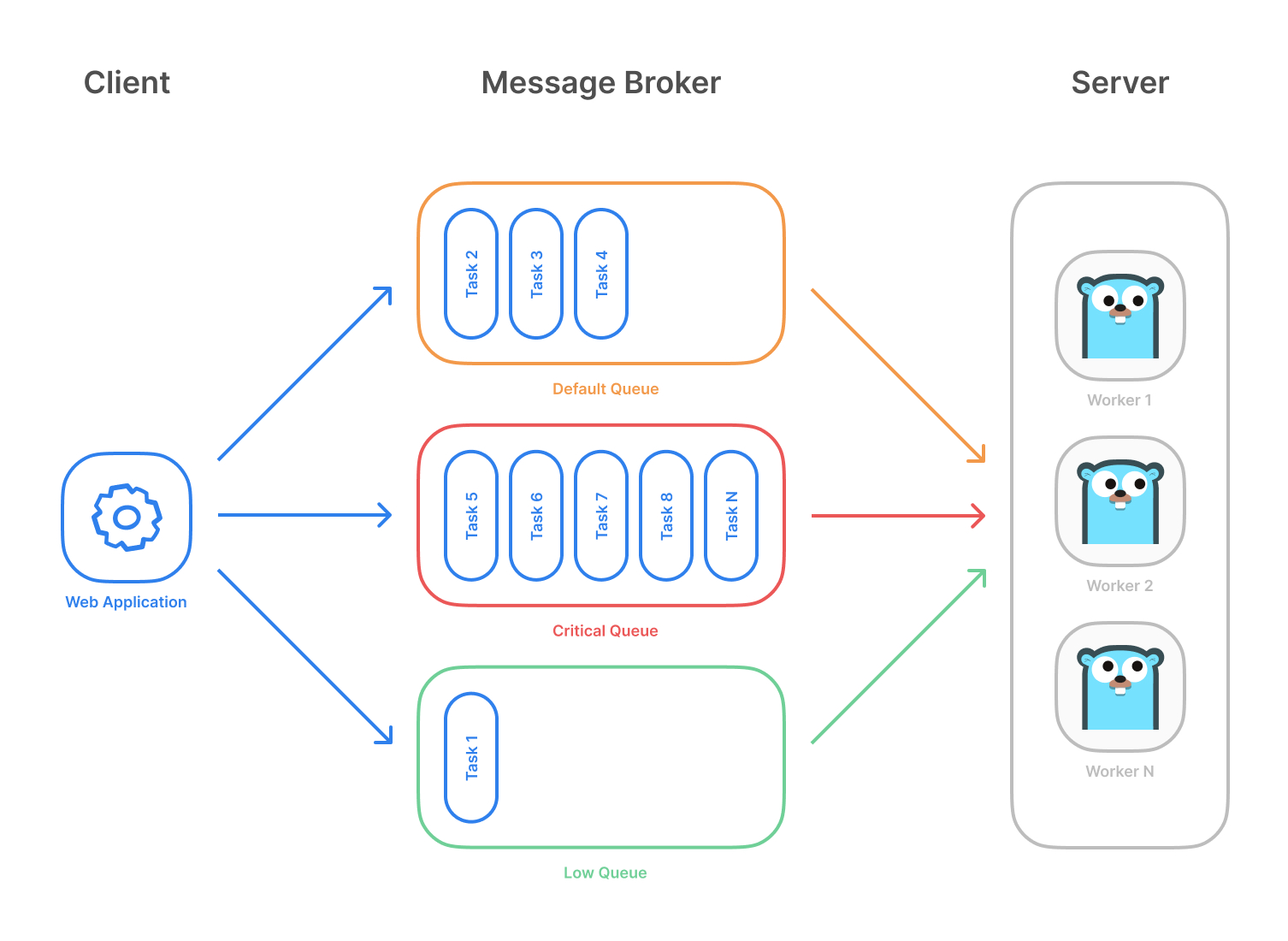

Highlevel overview of how Asynq works:

|

Highlevel overview of how Asynq works:

|

||||||

|

|

||||||

- Client puts task on a queue

|

- Client puts tasks on a queue

|

||||||

- Server pulls task off queues and starts a worker goroutine for each task

|

- Server pulls tasks off queues and starts a worker goroutine for each task

|

||||||

- Tasks are processed concurrently by multiple workers

|

- Tasks are processed concurrently by multiple workers

|

||||||

|

|

||||||

Task queues are used as a mechanism to distribute work across multiple machines.

|

Task queues are used as a mechanism to distribute work across multiple machines. A system can consist of multiple worker servers and brokers, giving way to high availability and horizontal scaling.

|

||||||

A system can consist of multiple worker servers and brokers, giving way to high availability and horizontal scaling.

|

|

||||||

|

|

||||||

|

**Example use case**

|

||||||

|

|

||||||

## Stability and Compatibility

|

|

||||||

|

|

||||||

**Important Note**: Current major version is zero (v0.x.x) to accomodate rapid development and fast iteration while getting early feedback from users (Feedback on APIs are appreciated!). The public API could change without a major version update before v1.0.0 release.

|

|

||||||

|

|

||||||

**Status**: The library is currently undergoing heavy development with frequent, breaking API changes.

|

|

||||||

|

|

||||||

## Features

|

## Features

|

||||||

|

|

||||||
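The new README overview describes the flow: a client puts tasks on a queue, a server pulls tasks off and starts a worker goroutine for each one, and tasks are processed concurrently by multiple workers. The in-memory sketch below imitates that flow with a channel standing in for the Redis-backed queue and a semaphore capping concurrency. Every name is hypothetical, and Redis persistence, retries, timeouts, and multiple queues are all left out.

```go
package main

import (
	"sort"
	"sync"
)

// task is a hypothetical unit of work.
type task struct {
	ID   int
	Type string
}

// enqueueAll plays the client role: it puts tasks on the queue, then closes it.
func enqueueAll(queue chan<- task, tasks []task) {
	for _, t := range tasks {
		queue <- t
	}
	close(queue)
}

// runServer pulls tasks off queue and starts one goroutine per task, letting
// at most concurrency handlers run at once. It returns after the queue is
// closed and every started handler has finished.
func runServer(queue <-chan task, concurrency int, handle func(task) string) []string {
	var (
		mu      sync.Mutex
		wg      sync.WaitGroup
		results []string
	)
	sem := make(chan struct{}, concurrency)
	for t := range queue {
		sem <- struct{}{} // wait for a free worker slot
		wg.Add(1)
		go func(t task) {
			defer wg.Done()
			defer func() { <-sem }() // release the slot
			r := handle(t)
			mu.Lock()
			results = append(results, r)
			mu.Unlock()
		}(t)
	}
	wg.Wait()
	sort.Strings(results) // completion order varies; sort for stable output
	return results
}
```

Bounding concurrency with a buffered channel keeps the number of running handlers at or below `concurrency`, even though a fresh goroutine is started for each task.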

@@ -42,18 +36,30 @@ A system can consist of multiple worker servers and brokers, giving way to high
 - Allow [timeout and deadline per task](https://github.com/hibiken/asynq/wiki/Task-Timeout-and-Cancelation)
 - [Flexible handler interface with support for middlewares](https://github.com/hibiken/asynq/wiki/Handler-Deep-Dive)
 - [Ability to pause queue](/tools/asynq/README.md#pause) to stop processing tasks from the queue
+- [Periodic Tasks](https://github.com/hibiken/asynq/wiki/Periodic-Tasks)
 - [Support Redis Cluster](https://github.com/hibiken/asynq/wiki/Redis-Cluster) for automatic sharding and high availability
 - [Support Redis Sentinels](https://github.com/hibiken/asynq/wiki/Automatic-Failover) for high availability
+- [Web UI](#web-ui) to inspect and remote-control queues and tasks
 - [CLI](#command-line-tool) to inspect and remote-control queues and tasks
 
+## Stability and Compatibility
+
+**Status**: The library is currently undergoing **heavy development** with frequent, breaking API changes.
+
+> ☝️ **Important Note**: Current major version is zero (`v0.x.x`) to accomodate rapid development and fast iteration while getting early feedback from users (_feedback on APIs are appreciated!_). The public API could change without a major version update before `v1.0.0` release.
 
 ## Quickstart
 
-First, make sure you are running a Redis server locally.
+Make sure you have Go installed ([download](https://golang.org/dl/)). Version `1.13` or higher is required.
 
+Initialize your project by creating a folder and then running `go mod init github.com/your/repo` ([learn more](https://blog.golang.org/using-go-modules)) inside the folder. Then install Asynq library with the [`go get`](https://golang.org/cmd/go/#hdr-Add_dependencies_to_current_module_and_install_them) command:
 
 ```sh
-$ redis-server
+go get -u github.com/hibiken/asynq
 ```
 
+Make sure you're running a Redis server locally or from a [Docker](https://hub.docker.com/_/redis) container. Version `3.0` or higher is required.
 
 Next, write a package that encapsulates task creation and task handling.
 
 ```go

@@ -71,19 +77,34 @@ const (
 	TypeImageResize = "image:resize"
 )
 
+type EmailDeliveryPayload struct {
+	UserID     int
+	TemplateID string
+}
+
+type ImageResizePayload struct {
+	SourceURL string
+}
+
 //----------------------------------------------
 // Write a function NewXXXTask to create a task.
 // A task consists of a type and a payload.
 //----------------------------------------------
 
-func NewEmailDeliveryTask(userID int, tmplID string) *asynq.Task {
-	payload := map[string]interface{}{"user_id": userID, "template_id": tmplID}
-	return asynq.NewTask(TypeEmailDelivery, payload)
+func NewEmailDeliveryTask(userID int, tmplID string) (*asynq.Task, error) {
+	payload, err := json.Marshal(EmailDeliveryPayload{UserID: userID, TemplateID: templID})
+	if err != nil {
+		return nil, err
+	}
+	return asynq.NewTask(TypeEmailDelivery, payload), nil
 }
 
-func NewImageResizeTask(src string) *asynq.Task {
-	payload := map[string]interface{}{"src": src}
-	return asynq.NewTask(TypeImageResize, payload)
+func NewImageResizeTask(src string) (*asynq.Task, error) {
+	payload, err := json.Marshal(ImageResizePayload{SourceURL: src})
+	if err != nil {
+		return nil, err
+	}
+	return asynq.NewTask(TypeImageResize, payload), nil
 }
 
 //---------------------------------------------------------------

````diff
@@ -95,30 +116,26 @@ func NewImageResizeTask(src string) *asynq.Task {
 //---------------------------------------------------------------
 
 func HandleEmailDeliveryTask(ctx context.Context, t *asynq.Task) error {
-    userID, err := t.Payload.GetInt("user_id")
-    if err != nil {
-        return err
+    var p EmailDeliveryPayload
+    if err := json.Unmarshal(t.Payload(), &p); err != nil {
+        return fmt.Errorf("json.Unmarshal failed: %v: %w", err, asynq.SkipRetry)
     }
-    tmplID, err := t.Payload.GetString("template_id")
-    if err != nil {
-        return err
-    }
-    fmt.Printf("Send Email to User: user_id = %d, template_id = %s\n", userID, tmplID)
+    log.Printf("Sending Email to User: user_id = %d, template_id = %s\n", p.UserID, p.TemplateID)
     // Email delivery code ...
     return nil
 }
 
 // ImageProcessor implements asynq.Handler interface.
-type ImageProcesser struct {
+type ImageProcessor struct {
     // ... fields for struct
 }
 
 func (p *ImageProcessor) ProcessTask(ctx context.Context, t *asynq.Task) error {
-    src, err := t.Payload.GetString("src")
-    if err != nil {
-        return err
+    var p ImageResizePayload
+    if err := json.Unmarshal(t.Payload(), &p); err != nil {
+        return fmt.Errorf("json.Unmarshal failed: %v: %w", err, asynq.SkipRetry)
     }
-    fmt.Printf("Resize image: src = %s\n", src)
+    log.Printf("Resizing image: src = %s\n", p.SourceURL)
     // Image resizing code ...
     return nil
 }
````

````diff
@@ -134,6 +151,8 @@ In your application code, import the above package and use [`Client`](https://pk
 package main
 
 import (
+    "fmt"
+    "log"
     "time"
 
     "github.com/hibiken/asynq"
````

````diff
@@ -143,21 +162,23 @@ import (
 const redisAddr = "127.0.0.1:6379"
 
 func main() {
-    r := asynq.RedisClientOpt{Addr: redisAddr}
-    c := asynq.NewClient(r)
-    defer c.Close()
+    client := asynq.NewClient(asynq.RedisClientOpt{Addr: redisAddr})
+    defer client.Close()
 
     // ------------------------------------------------------
     // Example 1: Enqueue task to be processed immediately.
     // Use (*Client).Enqueue method.
     // ------------------------------------------------------
 
-    t := tasks.NewEmailDeliveryTask(42, "some:template:id")
-    res, err := c.Enqueue(t)
+    task, err := tasks.NewEmailDeliveryTask(42, "some:template:id")
     if err != nil {
-        log.Fatal("could not enqueue task: %v", err)
+        log.Fatalf("could not create task: %v", err)
     }
-    fmt.Printf("Enqueued Result: %+v\n", res)
+    info, err := client.Enqueue(task)
+    if err != nil {
+        log.Fatalf("could not enqueue task: %v", err)
+    }
+    fmt.Printf("enqueued task: id=%s queue=%s\n", info.ID, info.Queue)
 
 
     // ------------------------------------------------------------
````

````diff
@@ -165,12 +186,11 @@ func main() {
     // Use ProcessIn or ProcessAt option.
     // ------------------------------------------------------------
 
-    t = tasks.NewEmailDeliveryTask(42, "other:template:id")
-    res, err = c.Enqueue(t, asynq.ProcessIn(24*time.Hour))
+    info, err = client.Enqueue(task, asynq.ProcessIn(24*time.Hour))
     if err != nil {
-        log.Fatal("could not schedule task: %v", err)
+        log.Fatalf("could not schedule task: %v", err)
     }
-    fmt.Printf("Enqueued Result: %+v\n", res)
+    fmt.Printf("enqueued task: id=%s queue=%s\n", info.ID, info.Queue)
 
 
     // ----------------------------------------------------------------------------
````

````diff
@@ -178,33 +198,34 @@ func main() {
     // Options include MaxRetry, Queue, Timeout, Deadline, Unique etc.
     // ----------------------------------------------------------------------------
 
-    c.SetDefaultOptions(tasks.ImageProcessing, asynq.MaxRetry(10), asynq.Timeout(3*time.Minute))
+    client.SetDefaultOptions(tasks.TypeImageResize, asynq.MaxRetry(10), asynq.Timeout(3*time.Minute))
 
-    t = tasks.NewImageResizeTask("some/blobstore/path")
-    res, err = c.Enqueue(t)
+    task, err = tasks.NewImageResizeTask("https://example.com/myassets/image.jpg")
     if err != nil {
-        log.Fatal("could not enqueue task: %v", err)
+        log.Fatalf("could not create task: %v", err)
     }
-    fmt.Printf("Enqueued Result: %+v\n", res)
+    info, err = client.Enqueue(task)
+    if err != nil {
+        log.Fatalf("could not enqueue task: %v", err)
+    }
+    fmt.Printf("enqueued task: id=%s queue=%s\n", info.ID, info.Queue)
 
     // ---------------------------------------------------------------------------
     // Example 4: Pass options to tune task processing behavior at enqueue time.
-    // Options passed at enqueue time override default ones, if any.
+    // Options passed at enqueue time override default ones.
     // ---------------------------------------------------------------------------
 
-    t = tasks.NewImageResizeTask("some/blobstore/path")
-    res, err = c.Enqueue(t, asynq.Queue("critical"), asynq.Timeout(30*time.Second))
+    info, err = client.Enqueue(task, asynq.Queue("critical"), asynq.Timeout(30*time.Second))
     if err != nil {
         log.Fatal("could not enqueue task: %v", err)
     }
-    fmt.Printf("Enqueued Result: %+v\n", res)
+    fmt.Printf("enqueued task: id=%s queue=%s\n", info.ID, info.Queue)
 }
 ```
 
-Next, start a worker server to process these tasks in the background.
-To start the background workers, use [`Server`](https://pkg.go.dev/github.com/hibiken/asynq?tab=doc#Server) and provide your [`Handler`](https://pkg.go.dev/github.com/hibiken/asynq?tab=doc#Handler) to process the tasks.
+Next, start a worker server to process these tasks in the background. To start the background workers, use [`Server`](https://pkg.go.dev/github.com/hibiken/asynq?tab=doc#Server) and provide your [`Handler`](https://pkg.go.dev/github.com/hibiken/asynq?tab=doc#Handler) to process the tasks.
 
-You can optionally use [`ServeMux`](https://pkg.go.dev/github.com/hibiken/asynq?tab=doc#ServeMux) to create a handler, just as you would with [`"net/http"`](https://golang.org/pkg/net/http/) Handler.
+You can optionally use [`ServeMux`](https://pkg.go.dev/github.com/hibiken/asynq?tab=doc#ServeMux) to create a handler, just as you would with [`net/http`](https://golang.org/pkg/net/http/) Handler.
 
 ```go
 package main
````

````diff
@@ -219,19 +240,20 @@ import (
 const redisAddr = "127.0.0.1:6379"
 
 func main() {
-    r := asynq.RedisClientOpt{Addr: redisAddr}
-
-    srv := asynq.NewServer(r, asynq.Config{
+    srv := asynq.NewServer(
+        asynq.RedisClientOpt{Addr: redisAddr}
+        asynq.Config{
             // Specify how many concurrent workers to use
             Concurrency: 10,
             // Optionally specify multiple queues with different priority.
             Queues: map[string]int{
                 "critical": 6,
                 "default": 3,
                 "low": 1,
+            },
+            // See the godoc for other configuration options
         },
-        // See the godoc for other configuration options
-    })
+    )
 
     // mux maps a type to a handler
     mux := asynq.NewServeMux()
````

@@ -245,52 +267,52 @@ func main() {

|

|||||||

}

|

}

|

||||||

```

|

```

|

||||||

|

|

||||||

For a more detailed walk-through of the library, see our [Getting Started Guide](https://github.com/hibiken/asynq/wiki/Getting-Started).

|

For a more detailed walk-through of the library, see our [Getting Started](https://github.com/hibiken/asynq/wiki/Getting-Started) guide.

|

||||||

|

|

||||||

To Learn more about `asynq` features and APIs, see our [Wiki](https://github.com/hibiken/asynq/wiki) and [godoc](https://godoc.org/github.com/hibiken/asynq).

|

To learn more about `asynq` features and APIs, see the package [godoc](https://godoc.org/github.com/hibiken/asynq).

|

||||||

|

|

||||||

|

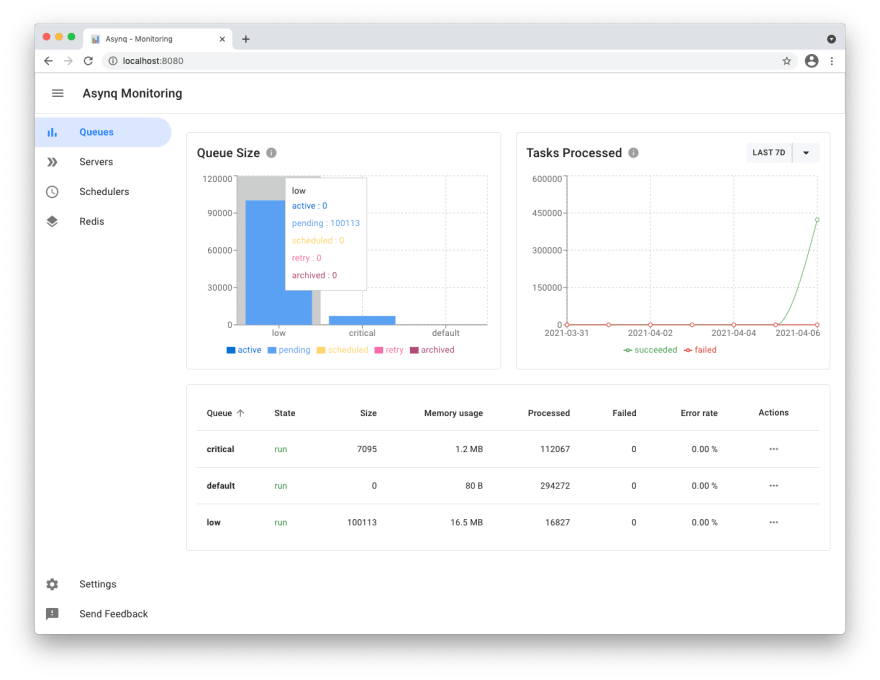

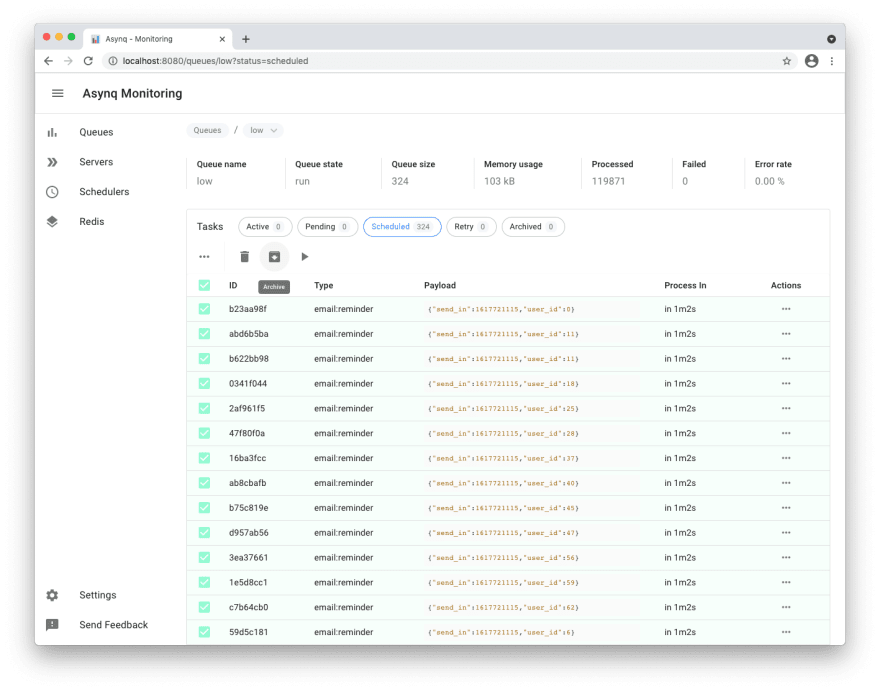

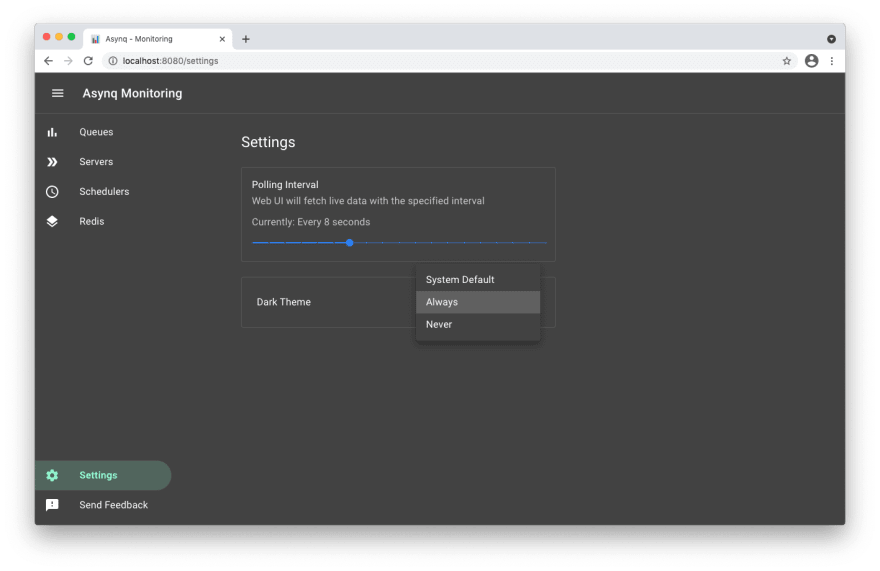

## Web UI

|

||||||

|

|

||||||

|

[Asynqmon](https://github.com/hibiken/asynqmon) is a web based tool for monitoring and administrating Asynq queues and tasks.

|

||||||

|

|

||||||

|

Here's a few screenshots of the Web UI:

|

||||||

|

|

||||||

|

**Queues view**

|

||||||

|

|

||||||

|

|

||||||

|

|

||||||

|

**Tasks view**

|

||||||

|

|

||||||

|

|

||||||

|

|

||||||

|

**Settings and adaptive dark mode**

|

||||||

|

|

||||||

|

|

||||||

|

|

||||||

|

For details on how to use the tool, refer to the tool's [README](https://github.com/hibiken/asynqmon#readme).

|

||||||

|

|

||||||

## Command Line Tool

|

## Command Line Tool

|

||||||

|

|

||||||

Asynq ships with a command line tool to inspect the state of queues and tasks.

|

Asynq ships with a command line tool to inspect the state of queues and tasks.

|

||||||

|

|

||||||

Here's an example of running the `stats` command.

|

|

||||||

|

|

||||||

|

|

||||||

|

|

||||||

For details on how to use the tool, refer to the tool's [README](/tools/asynq/README.md).

|

|

||||||

|

|

||||||

## Installation

|

|

||||||

|

|

||||||

To install `asynq` library, run the following command:

|

|

||||||

|

|

||||||

```sh

|

|

||||||

go get -u github.com/hibiken/asynq

|

|

||||||

```

|

|

||||||

|

|

||||||

To install the CLI tool, run the following command:

|

To install the CLI tool, run the following command:

|

||||||

|

|

||||||

```sh

|

```sh

|

||||||

go get -u github.com/hibiken/asynq/tools/asynq

|

go get -u github.com/hibiken/asynq/tools/asynq

|

||||||

```

|

```

|

||||||

|

|

||||||

## Requirements

|

Here's an example of running the `asynq stats` command:

|

||||||

|

|

||||||

| Dependency | Version |

|

|

||||||

| -------------------------- | ------- |

|

|

||||||

| [Redis](https://redis.io/) | v3.0+ |

|

For details on how to use the tool, refer to the tool's [README](/tools/asynq/README.md).

|

||||||

| [Go](https://golang.org/) | v1.13+ |

|

|

||||||

|

|

||||||

## Contributing

|

## Contributing

|

||||||

|

|

||||||

We are open to, and grateful for, any contributions (Github issues/pull-requests, feedback on Gitter channel, etc) made by the community.

|

We are open to, and grateful for, any contributions (GitHub issues/PRs, feedback on [Gitter channel](https://gitter.im/go-asynq/community), etc) made by the community.

|

||||||

|

|

||||||

Please see the [Contribution Guide](/CONTRIBUTING.md) before contributing.

|

Please see the [Contribution Guide](/CONTRIBUTING.md) before contributing.

|

||||||

|

|

||||||

## Acknowledgements

|

|

||||||

|

|

||||||

- [Sidekiq](https://github.com/mperham/sidekiq) : Many of the design ideas are taken from sidekiq and its Web UI

|

|

||||||

- [RQ](https://github.com/rq/rq) : Client APIs are inspired by rq library.

|

|

||||||

- [Cobra](https://github.com/spf13/cobra) : Asynq CLI is built with cobra

|

|

||||||

|

|

||||||

## License

|

## License

|

||||||

|

|

||||||

Asynq is released under the MIT license. See [LICENSE](https://github.com/hibiken/asynq/blob/master/LICENSE).

|

Copyright (c) 2019-present [Ken Hibino](https://github.com/hibiken) and [Contributors](https://github.com/hibiken/asynq/graphs/contributors). `Asynq` is free and open-source software licensed under the [MIT License](https://github.com/hibiken/asynq/blob/master/LICENSE). Official logo was created by [Vic Shóstak](https://github.com/koddr) and distributed under [Creative Commons](https://creativecommons.org/publicdomain/zero/1.0/) license (CC0 1.0 Universal).

|

||||||

|

|||||||

320 asynq.go

````diff
@@ -10,29 +10,151 @@ import (
     "net/url"
     "strconv"
     "strings"
+    "time"
 
     "github.com/go-redis/redis/v7"
+    "github.com/hibiken/asynq/internal/base"
 )
 
 // Task represents a unit of work to be performed.
 type Task struct {
-    // Type indicates the type of task to be performed.
-    Type string
+    // typename indicates the type of task to be performed.
+    typename string
 
-    // Payload holds data needed to perform the task.
-    Payload Payload
+    // payload holds data needed to perform the task.
+    payload []byte
 }
 
+func (t *Task) Type() string { return t.typename }
+func (t *Task) Payload() []byte { return t.payload }
+
 // NewTask returns a new Task given a type name and payload data.
-//
-// The payload values must be serializable.
-func NewTask(typename string, payload map[string]interface{}) *Task {
+func NewTask(typename string, payload []byte) *Task {
     return &Task{
-        Type: typename,
-        Payload: Payload{payload},
+        typename: typename,
+        payload: payload,
     }
 }
 
+// A TaskInfo describes a task and its metadata.
+type TaskInfo struct {
+    // ID is the identifier of the task.
+    ID string
+
+    // Queue is the name of the queue in which the task belongs.
+    Queue string
+
+    // Type is the type name of the task.
+    Type string
+
+    // Payload is the payload data of the task.
+    Payload []byte
+
+    // State indicates the task state.
+    State TaskState
+
+    // MaxRetry is the maximum number of times the task can be retried.
+    MaxRetry int
+
+    // Retried is the number of times the task has retried so far.
+    Retried int
+
+    // LastErr is the error message from the last failure.
+    LastErr string
+
+    // LastFailedAt is the time time of the last failure if any.
+    // If the task has no failures, LastFailedAt is zero time (i.e. time.Time{}).
+    LastFailedAt time.Time
+
+    // Timeout is the duration the task can be processed by Handler before being retried,
+    // zero if not specified
+    Timeout time.Duration
+
+    // Deadline is the deadline for the task, zero value if not specified.
+    Deadline time.Time
+
+    // NextProcessAt is the time the task is scheduled to be processed,
+    // zero if not applicable.
+    NextProcessAt time.Time
+}
+
+func newTaskInfo(msg *base.TaskMessage, state base.TaskState, nextProcessAt time.Time) *TaskInfo {
+    info := TaskInfo{
+        ID: msg.ID.String(),
+        Queue: msg.Queue,
+        Type: msg.Type,
+        Payload: msg.Payload, // Do we need to make a copy?
+        MaxRetry: msg.Retry,
+        Retried: msg.Retried,
+        LastErr: msg.ErrorMsg,
+        Timeout: time.Duration(msg.Timeout) * time.Second,
+        NextProcessAt: nextProcessAt,
+    }
+    if msg.LastFailedAt == 0 {
+        info.LastFailedAt = time.Time{}
+    } else {
+        info.LastFailedAt = time.Unix(msg.LastFailedAt, 0)
+    }
+
+    if msg.Deadline == 0 {
+        info.Deadline = time.Time{}
+    } else {
+        info.Deadline = time.Unix(msg.Deadline, 0)
+    }
+
+    switch state {
+    case base.TaskStateActive:
+        info.State = TaskStateActive
+    case base.TaskStatePending:
+        info.State = TaskStatePending
+    case base.TaskStateScheduled:
+        info.State = TaskStateScheduled
+    case base.TaskStateRetry:
+        info.State = TaskStateRetry
+    case base.TaskStateArchived:
+        info.State = TaskStateArchived
+    default:
+        panic(fmt.Sprintf("internal error: unknown state: %d", state))
+    }
+    return &info
+}
+
+// TaskState denotes the state of a task.
+type TaskState int
+
+const (
+    // Indicates that the task is currently being processed by Handler.
+    TaskStateActive TaskState = iota + 1
+
+    // Indicates that the task is ready to be processed by Handler.
+    TaskStatePending
+
+    // Indicates that the task is scheduled to be processed some time in the future.
+    TaskStateScheduled
+
+    // Indicates that the task has previously failed and scheduled to be processed some time in the future.
+    TaskStateRetry
+
+    // Indicates that the task is archived and stored for inspection purposes.
+    TaskStateArchived
+)
+
+func (s TaskState) String() string {
+    switch s {
+    case TaskStateActive:
+        return "active"
+    case TaskStatePending:
+        return "pending"
+    case TaskStateScheduled:
+        return "scheduled"
+    case TaskStateRetry:
+        return "retry"
+    case TaskStateArchived:
+        return "archived"
+    }
+    panic("asynq: unknown task state")
+}
+
 // RedisConnOpt is a discriminated union of types that represent Redis connection configuration option.
 //
 // RedisConnOpt represents a sum of following types:
````

````diff
@@ -40,7 +162,11 @@ func NewTask(typename string, payload map[string]interface{}) *Task {
 // - RedisClientOpt
 // - RedisFailoverClientOpt
 // - RedisClusterClientOpt
-type RedisConnOpt interface{}
+type RedisConnOpt interface {
+    // MakeRedisClient returns a new redis client instance.
+    // Return value is intentionally opaque to hide the implementation detail of redis client.
+    MakeRedisClient() interface{}
+}
 
 // RedisClientOpt is used to create a redis client that connects
 // to a redis server directly.
````
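
The diff changes `RedisConnOpt` from an empty `interface{}`, which any value satisfied, to a one-method interface: the compiler now rejects values that cannot build a client, and each option type constructs its own. A sketch of that pattern with placeholder types instead of go-redis clients; `ConnOpt`, `ClientOpt`, `ClusterClientOpt`, the client structs, and `describe` are hypothetical names chosen for illustration.

```go
package main

import "fmt"

// ConnOpt mirrors the shape of the new RedisConnOpt: one method that returns
// an opaque client value.
type ConnOpt interface {
	MakeRedisClient() interface{}
}

// singleClient and clusterClient are hypothetical placeholders for go-redis clients.
type singleClient struct{ addr string }
type clusterClient struct{ addrs []string }

type ClientOpt struct{ Addr string }
type ClusterClientOpt struct{ Addrs []string }

func (o ClientOpt) MakeRedisClient() interface{} { return &singleClient{addr: o.Addr} }
func (o ClusterClientOpt) MakeRedisClient() interface{} {
	return &clusterClient{addrs: o.Addrs}
}

// describe shows how a consumer can recover the concrete client with a type switch.
func describe(opt ConnOpt) string {
	switch c := opt.MakeRedisClient().(type) {
	case *singleClient:
		return "single node at " + c.addr
	case *clusterClient:
		return fmt.Sprintf("cluster of %d nodes", len(c.addrs))
	default:
		return "unsupported client"
	}
}

func main() {
	fmt.Println(describe(ClientOpt{Addr: "127.0.0.1:6379"}))
	fmt.Println(describe(ClusterClientOpt{Addrs: []string{":7000", ":7001", ":7002"}}))
	// describe("127.0.0.1:6379") would not compile: string has no MakeRedisClient method.
}
```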

````diff
@@ -64,6 +190,26 @@ type RedisClientOpt struct {
     // See: https://redis.io/commands/select.
     DB int
 
+    // Dial timeout for establishing new connections.
+    // Default is 5 seconds.
+    DialTimeout time.Duration
+
+    // Timeout for socket reads.
+    // If timeout is reached, read commands will fail with a timeout error
+    // instead of blocking.
+    //
+    // Use value -1 for no timeout and 0 for default.
+    // Default is 3 seconds.
+    ReadTimeout time.Duration
+
+    // Timeout for socket writes.
+    // If timeout is reached, write commands will fail with a timeout error
+    // instead of blocking.
+    //
+    // Use value -1 for no timeout and 0 for default.
+    // Default is ReadTimout.
+    WriteTimeout time.Duration
+
     // Maximum number of socket connections.
     // Default is 10 connections per every CPU as reported by runtime.NumCPU.
     PoolSize int
````

````diff
@@ -73,6 +219,21 @@ type RedisClientOpt struct {
     TLSConfig *tls.Config
 }
 
+func (opt RedisClientOpt) MakeRedisClient() interface{} {
+    return redis.NewClient(&redis.Options{
+        Network: opt.Network,
+        Addr: opt.Addr,
+        Username: opt.Username,
+        Password: opt.Password,
````

|