Mirror of https://github.com/hibiken/asynq.git, synced 2025-10-22 09:56:12 +08:00

Compare commits

350 Commits

| Author | SHA1 | Date | |

|---|---|---|---|

|

|

a19909f5f4 | ||

|

|

cea5110d15 | ||

|

|

9b63e23274 | ||

|

|

de25201d9f | ||

|

|

ec560afb01 | ||

|

|

d4006894ad | ||

|

|

59927509d8 | ||

|

|

8211167de2 | ||

|

|

d7169cd445 | ||

|

|

dfae8638e1 | ||

|

|

b9943de2ab | ||

|

|

871474f220 | ||

|

|

87dc392c7f | ||

|

|

dabcb120d5 | ||

|

|

bc2f1986d7 | ||

|

|

b8cb579407 | ||

|

|

bca624792c | ||

|

|

d865d89900 | ||

|

|

852af7abd1 | ||

|

|

5490d2c625 | ||

|

|

ebd7a32c0f | ||

|

|

55d0610a03 | ||

|

|

ab8a4f5b1e | ||

|

|

d7ceb0c090 | ||

|

|

8bd70c6f84 | ||

|

|

10ab4e3745 | ||

|

|

349f4c50fb | ||

|

|

dff2e3a336 | ||

|

|

65040af7b5 | ||

|

|

053fe2d1ee | ||

|

|

25832e5e95 | ||

|

|

aa26f3819e | ||

|

|

d94614bb9b | ||

|

|

ce46b07652 | ||

|

|

2d0170541c | ||

|

|

c1f08106da | ||

|

|

74cf804197 | ||

|

|

8dfabfccb3 | ||

|

|

5f20edcbd1 | ||

|

|

1ddb2f7bce | ||

|

|

82d18e3d91 | ||

|

|

43cb4ddf19 | ||

|

|

ddfc6747a1 | ||

|

|

970cb7a606 | ||

|

|

157e97e72e | ||

|

|

22e6c9d297 | ||

|

|

99a6750656 | ||

|

|

e7c1c3ad6f | ||

|

|

c9183374c5 | ||

|

|

6e7106c8f2 | ||

|

|

9f2c321e98 | ||

|

|

e2b61c9056 | ||

|

|

531d1ef089 | ||

|

|

413afc2ab6 | ||

|

|

6bb4818509 | ||

|

|

f4ddac4dcc | ||

|

|

4638405cbd | ||

|

|

9e2f88c00d | ||

|

|

dbdd9c6d5f | ||

|

|

2261c7c9a0 | ||

|

|

83cae4bb24 | ||

|

|

23c522dc9f | ||

|

|

0d2c0f612b | ||

|

|

d612a8a9e4 | ||

|

|

b3ef9e91a9 | ||

|

|

05534c6f24 | ||

|

|

f0db219f6a | ||

|

|

3ae0e7f528 | ||

|

|

421dc584ff | ||

|

|

cfd1a1dfe8 | ||

|

|

c197902dc0 | ||

|

|

e6355bf3f5 | ||

|

|

95c90a5cb8 | ||

|

|

6817af366a | ||

|

|

4bce28d677 | ||

|

|

73f930313c | ||

|

|

bff2a05d59 | ||

|

|

684a7e0c98 | ||

|

|

46b23d6495 | ||

|

|

c0ae62499f | ||

|

|

7744ade362 | ||

|

|

f532c95394 | ||

|

|

ff6768f9bb | ||

|

|

d5e9f3b1bd | ||

|

|

d02b722d8a | ||

|

|

99c7ebeef2 | ||

|

|

bf54621196 | ||

|

|

27baf6de0d | ||

|

|

1bd0bee1e5 | ||

|

|

a9feec5967 | ||

|

|

e01c6379c8 | ||

|

|

a0df047f71 | ||

|

|

68dd6d9a9d | ||

|

|

6cce31a134 | ||

|

|

f9d7af3def | ||

|

|

b0321fb465 | ||

|

|

7776c7ae53 | ||

|

|

709ca79a2b | ||

|

|

08d8f0b37c | ||

|

|

385323b679 | ||

|

|

77604af265 | ||

|

|

4765742e8a | ||

|

|

68839dc9d3 | ||

|

|

8922d2423a | ||

|

|

b358de907e | ||

|

|

8ee1825e67 | ||

|

|

c8bda26bed | ||

|

|

8aeeb61c9d | ||

|

|

96c51fdc23 | ||

|

|

ea9086fd8b | ||

|

|

e63d51da0c | ||

|

|

cd351d49b9 | ||

|

|

87264b66f3 | ||

|

|

62168b8d0d | ||

|

|

840f7245b1 | ||

|

|

12f4c7cf6e | ||

|

|

0ec3b55e6b | ||

|

|

4bcc5ab6aa | ||

|

|

456edb6b71 | ||

|

|

b835090ad8 | ||

|

|

09cbea66f6 | ||

|

|

b9c2572203 | ||

|

|

0bf767cf21 | ||

|

|

1812d05d21 | ||

|

|

4af65d5fa5 | ||

|

|

a19ad19382 | ||

|

|

8117ce8972 | ||

|

|

d98ecdebb4 | ||

|

|

ffe9aa74b3 | ||

|

|

d2d4029aba | ||

|

|

76bd865ebc | ||

|

|

136d1c9ea9 | ||

|

|

52e04355d3 | ||

|

|

cde3e57c6c | ||

|

|

dd66acef1b | ||

|

|

30a3d9641a | ||

|

|

961582cba6 | ||

|

|

430dbb298e | ||

|

|

675826be5f | ||

|

|

62f4e46b73 | ||

|

|

a500f8a534 | ||

|

|

bcfeff38ed | ||

|

|

12a90f6a8d | ||

|

|

807624e7dd | ||

|

|

4d65024bd7 | ||

|

|

76486b5cb4 | ||

|

|

1db516c53c | ||

|

|

cb5bdf245c | ||

|

|

267493ccef | ||

|

|

5d7f1b6a80 | ||

|

|

77ded502ab | ||

|

|

f2284be43d | ||

|

|

3cadab55cb | ||

|

|

298a420f9f | ||

|

|

b1d717c842 | ||

|

|

56e5762eea | ||

|

|

5ec41e388b | ||

|

|

9c95c41651 | ||

|

|

476812475e | ||

|

|

7af3981929 | ||

|

|

2516c4baba | ||

|

|

ebe482a65c | ||

|

|

3e9fc2f972 | ||

|

|

63ce9ed0f9 | ||

|

|

32d3f329b9 | ||

|

|

544c301a8b | ||

|

|

8b997d2fab | ||

|

|

901105a8d7 | ||

|

|

aaa3f1d4fd | ||

|

|

4722ca2d3d | ||

|

|

6a9d9fd717 | ||

|

|

de28c1ea19 | ||

|

|

f618f5b1f5 | ||

|

|

aa936466b3 | ||

|

|

5d1ec70544 | ||

|

|

d1d3be9b00 | ||

|

|

bc77f6fe14 | ||

|

|

efe197a47b | ||

|

|

97b5516183 | ||

|

|

8eafa03ca7 | ||

|

|

430b01c9aa | ||

|

|

14c381dc40 | ||

|

|

e13122723a | ||

|

|

eba7c4e085 | ||

|

|

bfde0b6283 | ||

|

|

afde6a7266 | ||

|

|

6529a1e0b1 | ||

|

|

c9a6ab8ae1 | ||

|

|

557c1a5044 | ||

|

|

0236eb9a1c | ||

|

|

3c2b2cf4a3 | ||

|

|

04df71198d | ||

|

|

2884044e75 | ||

|

|

3719fad396 | ||

|

|

42c7ac0746 | ||

|

|

d331ff055d | ||

|

|

ccb682853e | ||

|

|

7c3ad9e45c | ||

|

|

ea23db4f6b | ||

|

|

00a25ca570 | ||

|

|

7235041128 | ||

|

|

a150d18ed7 | ||

|

|

0712e90f23 | ||

|

|

c5100a9c23 | ||

|

|

196d66f221 | ||

|

|

38509e309f | ||

|

|

f4dd8fe962 | ||

|

|

c06e9de97d | ||

|

|

52d536a8f5 | ||

|

|

f9c0673116 | ||

|

|

b604d25937 | ||

|

|

dfdf530a24 | ||

|

|

e9239260ae | ||

|

|

8f9d5a3352 | ||

|

|

c4dc993241 | ||

|

|

37dfd746d4 | ||

|

|

8d6e4167ab | ||

|

|

476862dd7b | ||

|

|

dcd873fa2a | ||

|

|

2604bb2192 | ||

|

|

942345ee80 | ||

|

|

1f059eeee1 | ||

|

|

4ae73abdaa | ||

|

|

96b2318300 | ||

|

|

8312515e64 | ||

|

|

50e7f38365 | ||

|

|

fadcae76d6 | ||

|

|

a2d4ead989 | ||

|

|

82b6828f43 | ||

|

|

3114987428 | ||

|

|

1ee3b10104 | ||

|

|

6d720d6a05 | ||

|

|

3e6981170d | ||

|

|

a9aa480551 | ||

|

|

9d41de795a | ||

|

|

c43fb21a0a | ||

|

|

a293efcdab | ||

|

|

69d7ec725a | ||

|

|

450a9aa1e2 | ||

|

|

6e294a7013 | ||

|

|

c26b7469bd | ||

|

|

818c2d6f35 | ||

|

|

e09870a08a | ||

|

|

ac3d5b126a | ||

|

|

29e542e591 | ||

|

|

a891ce5568 | ||

|

|

ebe3c4083f | ||

|

|

c8c47fcbf0 | ||

|

|

cca680a7fd | ||

|

|

8076b5ae50 | ||

|

|

a42c174dae | ||

|

|

a88325cb96 | ||

|

|

eb739a0258 | ||

|

|

a9c31553b8 | ||

|

|

dab8295883 | ||

|

|

131ac823fd | ||

|

|

4897dba397 | ||

|

|

6b96459881 | ||

|

|

572eb338d5 | ||

|

|

27f4027447 | ||

|

|

ee1afd12f5 | ||

|

|

3ac548e97c | ||

|

|

f38f94b947 | ||

|

|

d6f389e63f | ||

|

|

118ef27bf2 | ||

|

|

fad0696828 | ||

|

|

4037b41479 | ||

|

|

96f23d88cd | ||

|

|

83bdca5220 | ||

|

|

2f226dfb84 | ||

|

|

3f26122ac0 | ||

|

|

2a18181501 | ||

|

|

aa2676bb57 | ||

|

|

9348a62691 | ||

|

|

f59de9ac56 | ||

|

|

996a6c0ead | ||

|

|

47e9ba4eba | ||

|

|

dbf140a767 | ||

|

|

5f82b4b365 | ||

|

|

44a3d177f0 | ||

|

|

24b13bd865 | ||

|

|

d25090c669 | ||

|

|

b5caefd663 | ||

|

|

becd26479b | ||

|

|

4b81b91d3e | ||

|

|

8e23b865e9 | ||

|

|

a873d488ee | ||

|

|

e0a8f1252a | ||

|

|

650d7fdbe9 | ||

|

|

f6d504939e | ||

|

|

74f08795f8 | ||

|

|

35b2b1782e | ||

|

|

f63dcce0c0 | ||

|

|

565f86ee4f | ||

|

|

94aa878060 | ||

|

|

50b6034bf9 | ||

|

|

154113d0d0 | ||

|

|

669c7995c4 | ||

|

|

6d6a301379 | ||

|

|

53f9475582 | ||

|

|

e8fdbc5a72 | ||

|

|

5f06c308f0 | ||

|

|

a913e6d73f | ||

|

|

6978e93080 | ||

|

|

92d77bbc6e | ||

|

|

a28f61f313 | ||

|

|

9bd3d8e19e | ||

|

|

7382e2aeb8 | ||

|

|

007fac8055 | ||

|

|

8d43fe407a | ||

|

|

34b90ecc8a | ||

|

|

8b60e6a268 | ||

|

|

486dcd799b | ||

|

|

195f4603bb | ||

|

|

2e2c9b9f6b | ||

|

|

199bf4d66a | ||

|

|

7e942ec241 | ||

|

|

379da8f7a2 | ||

|

|

feee87adda | ||

|

|

7657f560ec | ||

|

|

7c7de0d8e0 | ||

|

|

83f1e20d74 | ||

|

|

4e8ac151ae | ||

|

|

08b71672aa | ||

|

|

92af00f9fd | ||

|

|

113451ce6a | ||

|

|

9cd9f3d6b4 | ||

|

|

7b9119c703 | ||

|

|

9b05dea394 | ||

|

|

6cc5bafaba | ||

|

|

716d3d987e | ||

|

|

0527b93432 | ||

|

|

5dddc35d7c | ||

|

|

4e5f596910 | ||

|

|

8bf5917cd9 | ||

|

|

7f30fa2bb6 | ||

|

|

ade6e61f51 | ||

|

|

a2abeedaa0 | ||

|

|

81bb52b08c | ||

|

|

bc2a7635a0 | ||

|

|

f65d408bf9 | ||

|

|

4749b4bbfc | ||

|

|

06c4a1c7f8 | ||

|

|

8af4cbad51 | ||

|

|

4e800a7f68 | ||

|

|

d6a5c84dc6 | ||

|

|

363cfedb49 | ||

|

|

4595bd41c3 | ||

|

|

e236d55477 | ||

|

|

a38f628f3b |

12

.github/FUNDING.yml

vendored

Normal file

@@ -0,0 +1,12 @@

+# These are supported funding model platforms
+
+github: [hibiken] # Replace with up to 4 GitHub Sponsors-enabled usernames e.g., [user1, user2]
+patreon: # Replace with a single Patreon username
+open_collective: # Replace with a single Open Collective username
+ko_fi: # Replace with a single Ko-fi username
+tidelift: # Replace with a single Tidelift platform-name/package-name e.g., npm/babel
+community_bridge: # Replace with a single Community Bridge project-name e.g., cloud-foundry
+liberapay: # Replace with a single Liberapay username
+issuehunt: # Replace with a single IssueHunt username
+otechie: # Replace with a single Otechie username
+custom: # Replace with up to 4 custom sponsorship URLs e.g., ['link1', 'link2']

82

.github/workflows/benchstat.yml

vendored

Normal file

@@ -0,0 +1,82 @@

+# This workflow runs benchmarks against the current branch,
+# compares them to benchmarks against master,
+# and uploads the results as an artifact.
+
+name: benchstat
+
+on: [pull_request]
+
+jobs:
+  incoming:
+    runs-on: ubuntu-latest
+    services:
+      redis:
+        image: redis
+        ports:
+          - 6379:6379
+    steps:
+      - name: Checkout
+        uses: actions/checkout@v2
+      - name: Set up Go
+        uses: actions/setup-go@v2
+        with:
+          go-version: 1.16.x
+      - name: Benchmark
+        run: go test -run=^$ -bench=. -count=5 -timeout=60m ./... | tee -a new.txt
+      - name: Upload Benchmark
+        uses: actions/upload-artifact@v2
+        with:
+          name: bench-incoming
+          path: new.txt
+
+  current:
+    runs-on: ubuntu-latest
+    services:
+      redis:
+        image: redis
+        ports:
+          - 6379:6379
+    steps:
+      - name: Checkout
+        uses: actions/checkout@v2
+        with:
+          ref: master
+      - name: Set up Go
+        uses: actions/setup-go@v2
+        with:
+          go-version: 1.15.x
+      - name: Benchmark
+        run: go test -run=^$ -bench=. -count=5 -timeout=60m ./... | tee -a old.txt
+      - name: Upload Benchmark
+        uses: actions/upload-artifact@v2
+        with:
+          name: bench-current
+          path: old.txt
+
+  benchstat:
+    needs: [incoming, current]
+    runs-on: ubuntu-latest
+    steps:
+      - name: Checkout
+        uses: actions/checkout@v2
+      - name: Set up Go
+        uses: actions/setup-go@v2
+        with:
+          go-version: 1.15.x
+      - name: Install benchstat
+        run: go get -u golang.org/x/perf/cmd/benchstat
+      - name: Download Incoming
+        uses: actions/download-artifact@v2
+        with:
+          name: bench-incoming
+      - name: Download Current
+        uses: actions/download-artifact@v2
+        with:
+          name: bench-current
+      - name: Benchstat Results
+        run: benchstat old.txt new.txt | tee -a benchstat.txt
+      - name: Upload benchstat results
+        uses: actions/upload-artifact@v2
+        with:
+          name: benchstat
+          path: benchstat.txt
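The benchstat workflow compares two files of `go test -bench` output, `old.txt` from master and `new.txt` from the pull request branch. As a rough illustration of what such a comparison involves, the Go sketch below averages the repeated `ns/op` samples per benchmark and reports the relative change. It is only a simplified stand-in: the real `benchstat` tool does proper statistical analysis, and the function names `meanNsPerOp` and `percentDelta`, as well as the sample benchmark lines, are made up for this example.

```go
package main

import (
	"bufio"
	"fmt"
	"sort"
	"strconv"
	"strings"
)

// meanNsPerOp parses `go test -bench` output and returns the mean ns/op for
// each benchmark name, averaging over repeated runs (e.g. -count=5).
// Hypothetical helper for illustration; not part of benchstat.
func meanNsPerOp(output string) map[string]float64 {
	sum := map[string]float64{}
	count := map[string]int{}
	sc := bufio.NewScanner(strings.NewReader(output))
	for sc.Scan() {
		f := strings.Fields(sc.Text())
		// Expected shape: BenchmarkName-8 <iterations> <value> ns/op ...
		if len(f) < 4 || !strings.HasPrefix(f[0], "Benchmark") || f[3] != "ns/op" {
			continue // skip PASS/ok lines and anything else
		}
		v, err := strconv.ParseFloat(f[2], 64)
		if err != nil {
			continue
		}
		sum[f[0]] += v
		count[f[0]]++
	}
	mean := make(map[string]float64, len(sum))
	for name, s := range sum {
		mean[name] = s / float64(count[name])
	}
	return mean
}

// percentDelta returns the relative change from oldV to newV in percent.
func percentDelta(oldV, newV float64) float64 {
	return (newV - oldV) / oldV * 100
}

func main() {
	// Made-up sample data standing in for old.txt and new.txt.
	oldOut := "BenchmarkEnqueue-8 1000 2000 ns/op\nBenchmarkEnqueue-8 1000 2200 ns/op\n"
	newOut := "BenchmarkEnqueue-8 1000 1800 ns/op\nBenchmarkEnqueue-8 1000 1980 ns/op\n"

	oldMean, newMean := meanNsPerOp(oldOut), meanNsPerOp(newOut)

	// Only benchmarks present in both runs can be compared.
	names := make([]string, 0, len(oldMean))
	for name := range oldMean {
		if _, ok := newMean[name]; ok {
			names = append(names, name)
		}
	}
	sort.Strings(names)

	for _, name := range names {
		fmt.Printf("%s: %.0f -> %.0f ns/op (%+.1f%%)\n",
			name, oldMean[name], newMean[name], percentDelta(oldMean[name], newMean[name]))
	}
}
```

A plain mean is sensitive to noisy runs, which is one reason the workflow collects five samples per benchmark (`-count=5`) and hands them to `benchstat` rather than comparing single numbers.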

44

.github/workflows/build.yml

vendored

Normal file

@@ -0,0 +1,44 @@

+name: build
+
+on: [push, pull_request]
+
+jobs:
+  build:
+    strategy:
+      matrix:
+        os: [ubuntu-latest]
+        go-version: [1.14.x, 1.15.x, 1.16.x, 1.17.x]
+    runs-on: ${{ matrix.os }}
+    services:
+      redis:
+        image: redis
+        ports:
+          - 6379:6379
+    steps:
+      - uses: actions/checkout@v2
+
+      - name: Set up Go
+        uses: actions/setup-go@v2
+        with:
+          go-version: ${{ matrix.go-version }}
+
+      - name: Build core module
+        run: go build -v ./...
+
+      - name: Build x module
+        run: cd x && go build -v ./... && cd ..
+
+      - name: Build tools module
+        run: cd tools && go build -v ./... && cd ..
+
+      - name: Test core module
+        run: go test -race -v -coverprofile=coverage.txt -covermode=atomic ./...
+
+      - name: Test x module
+        run: cd x && go test -race -v ./... && cd ..
+
+      - name: Benchmark Test
+        run: go test -run=^$ -bench=. -loglevel=debug ./...
+
+      - name: Upload coverage to Codecov
+        uses: codecov/codecov-action@v1

8

.gitignore

vendored

@@ -1,3 +1,4 @@

+vendor
 # Binaries for programs and plugins
 *.exe
 *.exe~

@@ -14,8 +15,13 @@

 # Ignore examples for now
 /examples

-# Ignore command binary
+# Ignore tool binaries
 /tools/asynq/asynq
+/tools/metrics_exporter/metrics_exporter

 # Ignore asynq config file
 .asynq.*
+
+# Ignore editor config files
+.vscode
+.idea

12

.travis.yml

@@ -1,12 +0,0 @@

-language: go
-go_import_path: github.com/hibiken/asynq
-git:
-  depth: 1
-go: [1.13.x, 1.14.x]
-script:
-  - go test -race -v -coverprofile=coverage.txt -covermode=atomic ./...
-services:
-  - redis-server
-after_success:
-  - bash ./.travis/benchcmp.sh
-  - bash <(curl -s https://codecov.io/bash)

@@ -1,15 +0,0 @@

-if [ "${TRAVIS_PULL_REQUEST_BRANCH:-$TRAVIS_BRANCH}" != "master" ]; then
-  REMOTE_URL="$(git config --get remote.origin.url)";
-  cd ${TRAVIS_BUILD_DIR}/.. && \
-  git clone ${REMOTE_URL} "${TRAVIS_REPO_SLUG}-bench" && \
-  cd "${TRAVIS_REPO_SLUG}-bench" && \
-  # Benchmark master
-  git checkout master && \
-  go test -run=XXX -bench=. ./... > master.txt && \
-  # Benchmark feature branch
-  git checkout ${TRAVIS_COMMIT} && \
-  go test -run=XXX -bench=. ./... > feature.txt && \
-  go get -u golang.org/x/tools/cmd/benchcmp && \
-  # compare two benchmarks
-  benchcmp master.txt feature.txt;
-fi

326

CHANGELOG.md

@@ -7,6 +7,332 @@ and this project adheres to [Semantic Versioning](https://semver.org/spec/v2.0.0


 ## [Unreleased]

+## [0.22.0] - 2022-02-19
+
+### Added
+
+- `BaseContext` is introduced in `Config` to specify callback hook to provide a base `context` from which `Handler` `context` is derived
+- `IsOrphaned` field is added to `TaskInfo` to describe a task left in active state with no worker processing it.
+
+### Changed
+
+- `Server` now recovers tasks with an expired lease. Recovered tasks are retried/archived with `ErrLeaseExpired` error.
+
+## [0.21.0] - 2022-01-22
+
+### Added
+
+- `PeriodicTaskManager` is added. Prefer using this over `Scheduler` as it has better support for dynamic periodic tasks.
+- The `asynq stats` command now supports a `--json` option, making its output a JSON object
+- Introduced new configuration for `DelayedTaskCheckInterval`. See [godoc](https://godoc.org/github.com/hibiken/asynq) for more details.
+
+## [0.20.0] - 2021-12-19
+
+### Added
+
+- Package `x/metrics` is added.
+- Tool `tools/metrics_exporter` binary is added.
+- `ProcessedTotal` and `FailedTotal` fields were added to `QueueInfo` struct.
+
+## [0.19.1] - 2021-12-12
+
+### Added
+
+- `Latency` field is added to `QueueInfo`.
+- `EnqueueContext` method is added to `Client`.
+
+### Fixed
+
+- Fixed an error when the user passes a duration less than 1s to the `Unique` option
+

+## [0.19.0] - 2021-11-06
+
+### Changed
+
+- `NewTask` takes `Option` as variadic argument
+- Bumped minimum supported go version to 1.14 (i.e. go1.14 or higher is required).
+
+### Added
+
+- `Retention` option is added to allow user to specify task retention duration after completion.
+- `TaskID` option is added to allow user to specify task ID.
+- `ErrTaskIDConflict` sentinel error value is added.
+- `ResultWriter` type is added and provided through `Task.ResultWriter` method.
+- `TaskInfo` has new fields `CompletedAt`, `Result` and `Retention`.
+
+### Removed
+
+- `Client.SetDefaultOptions` is removed. Use `NewTask` instead to pass default options for tasks.
+
+## [0.18.6] - 2021-10-03
+
+### Changed
+
+- Updated `github.com/go-redis/redis` package to v8
+
+## [0.18.5] - 2021-09-01
+
+### Added
+
+- `IsFailure` config option is added to determine whether error returned from Handler counts as a failure.
+
+## [0.18.4] - 2021-08-17
+
+### Fixed
+
+- Scheduler methods are now thread-safe. It's now safe to call `Register` and `Unregister` concurrently.
+
+## [0.18.3] - 2021-08-09
+
+### Changed
+
+- `Client.Enqueue` no longer enqueues tasks with empty typename; Error message is returned.
+
+## [0.18.2] - 2021-07-15
+
+### Changed
+
+- Changed `Queue` function to not convert the provided queue name to lowercase. Queue names are now case-sensitive.
+- `QueueInfo.MemoryUsage` is now an approximate usage value.
+
+### Fixed
+
+- Fixed latency issue around memory usage (see https://github.com/hibiken/asynq/issues/309).
+
+## [0.18.1] - 2021-07-04
+
+### Changed
+
+- Changed to execute task recovering logic when server starts up; Previously it needed to wait for a minute for task recovering logic to execute.
+
+### Fixed
+
+- Fixed task recovering logic to execute every minute
+

+## [0.18.0] - 2021-06-29
+
+### Changed
+
+- NewTask function now takes array of bytes as payload.
+- Task `Type` and `Payload` should be accessed by a method call.
+- `Server` API has changed. Renamed `Quiet` to `Stop`. Renamed `Stop` to `Shutdown`. _Note:_ As a result of this renaming, the behavior of `Stop` has changed. Please update the existing code to call `Shutdown` where it used to call `Stop`.
+- `Scheduler` API has changed. Renamed `Stop` to `Shutdown`.
+- Requires redis v4.0+ for multiple field/value pair support
+- `Client.Enqueue` now returns `TaskInfo`
+- `Inspector.RunTaskByKey` is replaced with `Inspector.RunTask`
+- `Inspector.DeleteTaskByKey` is replaced with `Inspector.DeleteTask`
+- `Inspector.ArchiveTaskByKey` is replaced with `Inspector.ArchiveTask`
+- `inspeq` package is removed. All types and functions from the package are moved to `asynq` package.
+- `WorkerInfo` field names have changed.
+- `Inspector.CancelActiveTask` is renamed to `Inspector.CancelProcessing`
+
+## [0.17.2] - 2021-06-06
+
+### Fixed
+
+- Free unique lock when task is deleted (https://github.com/hibiken/asynq/issues/275).
+
+## [0.17.1] - 2021-04-04
+
+### Fixed
+
+- Fix bug in internal `RDB.memoryUsage` method.
+
+## [0.17.0] - 2021-03-24
+
+### Added
+
+- `DialTimeout`, `ReadTimeout`, and `WriteTimeout` options are added to `RedisConnOpt`.
+
+## [0.16.1] - 2021-03-20
+
+### Fixed
+
+- Replace `KEYS` command with `SCAN` as recommended by [redis doc](https://redis.io/commands/KEYS).
+
+## [0.16.0] - 2021-03-10
+
+### Added
+
+- `Unregister` method is added to `Scheduler` to remove a registered entry.
+

+## [0.15.0] - 2021-01-31
+
+**IMPORTANT**: All `Inspector` related code is moved to subpackage "github.com/hibiken/asynq/inspeq"
+
+### Changed
+
+- `Inspector` related code is moved to subpackage "github.com/hibiken/asynq/inspeq".
+- `RedisConnOpt` interface has changed slightly. If you have been passing `RedisClientOpt`, `RedisFailoverClientOpt`, or `RedisClusterClientOpt` as a pointer,
+  update your code to pass as a value.
+- `ErrorMsg` field in `RetryTask` and `ArchivedTask` was renamed to `LastError`.
+
+### Added
+
+- `MaxRetry`, `Retried`, `LastError` fields were added to all task types returned from `Inspector`.
+- `MemoryUsage` field was added to `QueueStats`.
+- `DeleteAllPendingTasks`, `ArchiveAllPendingTasks` were added to `Inspector`
+- `DeleteTaskByKey` and `ArchiveTaskByKey` now support deleting/archiving `PendingTask`.
+- asynq CLI now supports deleting/archiving pending tasks.
+
+## [0.14.1] - 2021-01-19
+
+### Fixed
+
+- `go.mod` file for CLI
+
+## [0.14.0] - 2021-01-14
+
+**IMPORTANT**: Please run `asynq migrate` command to migrate from the previous versions.
+
+### Changed
+
+- Renamed `DeadTask` to `ArchivedTask`.
+- Renamed the operation `Kill` to `Archive` in `Inspector`.
+- Print stack trace when Handler panics.
+- Include a file name and a line number in the error message when recovering from a panic.
+
+### Added
+
+- `DefaultRetryDelayFunc` is now a public API, which can be used in the custom `RetryDelayFunc`.
+- `SkipRetry` error is added to be used as a return value from `Handler`.
+- `Servers` method is added to `Inspector`
+- `CancelActiveTask` method is added to `Inspector`.
+- `ListSchedulerEnqueueEvents` method is added to `Inspector`.
+- `SchedulerEntries` method is added to `Inspector`.
+- `DeleteQueue` method is added to `Inspector`.
+

+## [0.13.1] - 2020-11-22
+
+### Fixed
+
+- Fixed processor to wait for specified time duration before forcefully shutting down workers.
+
+## [0.13.0] - 2020-10-13
+
+### Added
+
+- `Scheduler` type is added to enable periodic tasks. See the godoc for its APIs and [wiki](https://github.com/hibiken/asynq/wiki/Periodic-Tasks) for the getting-started guide.
+
+### Changed
+
+- interface `Option` has changed. See the godoc for the new interface.
+  This change would have no impact as long as you are using exported functions (e.g. `MaxRetry`, `Queue`, etc)
+  to create `Option`s.
+
+### Added
+
+- `Payload.String() string` method is added
+- `Payload.MarshalJSON() ([]byte, error)` method is added
+

+## [0.12.0] - 2020-09-12
+
+**IMPORTANT**: If you are upgrading from a previous version, please install the latest version of the CLI `go get -u github.com/hibiken/asynq/tools/asynq` and run `asynq migrate` command. No process should be writing to Redis while you run the migration command.
+
+## The semantics of queue have changed
+
+Previously, we called tasks that are ready to be processed _"Enqueued tasks"_, and other tasks that are scheduled to be processed in the future _"Scheduled tasks"_, etc.
+We changed the semantics of _"Enqueue"_ slightly; All tasks that client pushes to Redis are _Enqueued_ to a queue. Within a queue, tasks will transition from one state to another.
+Possible task states are:
+
+- `Pending`: task is ready to be processed (previously called "Enqueued")
+- `Active`: task is currently being processed (previously called "InProgress")
+- `Scheduled`: task is scheduled to be processed in the future
+- `Retry`: task failed to be processed and will be retried again in the future
+- `Dead`: task has exhausted all of its retries and is stored for manual inspection
+
+**This change in semantics is reflected in the new `Inspector` API and CLI commands.**
+
+---
+
+### Changed
+
+#### `Client`
+
+Use `ProcessIn` or `ProcessAt` option to schedule a task instead of `EnqueueIn` or `EnqueueAt`.
+
+| Previously                  | v0.12.0                                    |
+| --------------------------- | ------------------------------------------ |
+| `client.EnqueueAt(t, task)` | `client.Enqueue(task, asynq.ProcessAt(t))` |
+| `client.EnqueueIn(d, task)` | `client.Enqueue(task, asynq.ProcessIn(d))` |
+
+#### `Inspector`
+
+All Inspector methods are scoped to a queue, and the methods take `qname (string)` as the first argument.
+`EnqueuedTask` is renamed to `PendingTask` and its corresponding methods.
+`InProgressTask` is renamed to `ActiveTask` and its corresponding methods.
+Command "Enqueue" is replaced by the verb "Run" (e.g. `EnqueueAllScheduledTasks` --> `RunAllScheduledTasks`)
+
+#### `CLI`
+
+CLI commands are restructured to use subcommands. Commands are organized into a few management commands:
+To view details on any command, use `asynq help <command> <subcommand>`.
+
+- `asynq stats`
+- `asynq queue [ls inspect history rm pause unpause]`
+- `asynq task [ls cancel delete kill run delete-all kill-all run-all]`
+- `asynq server [ls]`
+
+### Added
+
+#### `RedisConnOpt`
+
+- `RedisClusterClientOpt` is added to connect to Redis Cluster.
+- `Username` field is added to all `RedisConnOpt` types in order to authenticate connection when Redis ACLs are used.
+
+#### `Client`
+
+- `ProcessIn(d time.Duration) Option` and `ProcessAt(t time.Time) Option` are added to replace `EnqueueIn` and `EnqueueAt` functionality.
+
+#### `Inspector`
+
+- `Queues() ([]string, error)` method is added to get all queue names.
+- `ClusterKeySlot(qname string) (int64, error)` method is added to get queue's hash slot in Redis cluster.
+- `ClusterNodes(qname string) ([]ClusterNode, error)` method is added to get a list of Redis cluster nodes for the given queue.
+- `Close() error` method is added to close connection with redis.
+
+#### `Handler`
+
+- `GetQueueName(ctx context.Context) (string, bool)` helper is added to extract queue name from a context.
+

+## [0.11.0] - 2020-07-28
+
+### Added
+
+- `Inspector` type was added to monitor and mutate state of queues and tasks.
+- `HealthCheckFunc` and `HealthCheckInterval` fields were added to `Config` to allow user to specify a callback
+  function to check for broker connection.
+
+## [0.10.0] - 2020-07-06
+
+### Changed
+
+- All tasks now require timeout or deadline. By default, timeout is set to 30 mins.
+- Tasks that exceed their deadline are automatically retried.
+- Encoding schema for task message has changed. Please install the latest CLI and run `migrate` command if
+  you have tasks enqueued with the previous version of asynq.
+- API of `(*Client).Enqueue`, `(*Client).EnqueueIn`, and `(*Client).EnqueueAt` has changed to return a `*Result`.
+- API of `ErrorHandler` has changed. It now takes context as the first argument and removed `retried`, `maxRetry` from the argument list.
+  Use `GetRetryCount` and/or `GetMaxRetry` to get the count values.
+
+## [0.9.4] - 2020-06-13
+
+### Fixed
+
+- Fixes issue of same tasks processed by more than one worker (https://github.com/hibiken/asynq/issues/90).
+
+## [0.9.3] - 2020-06-12
+
+### Fixed
+
+- Fixes the JSON number overflow issue (https://github.com/hibiken/asynq/issues/166).
+
+## [0.9.2] - 2020-06-08
+
+### Added
+
+- The `pause` and `unpause` commands were added to the CLI. See README for the CLI for details.
+
 ## [0.9.1] - 2020-05-29

 ### Added

128

CODE_OF_CONDUCT.md

Normal file

128

CODE_OF_CONDUCT.md

Normal file

@@ -0,0 +1,128 @@

|

|||||||

|

# Contributor Covenant Code of Conduct

|

||||||

|

|

||||||

|

## Our Pledge

|

||||||

|

|

||||||

|

We as members, contributors, and leaders pledge to make participation in our

|

||||||

|

community a harassment-free experience for everyone, regardless of age, body

|

||||||

|

size, visible or invisible disability, ethnicity, sex characteristics, gender

|

||||||

|

identity and expression, level of experience, education, socio-economic status,

|

||||||

|

nationality, personal appearance, race, religion, or sexual identity

|

||||||

|

and orientation.

|

||||||

|

|

||||||

|

We pledge to act and interact in ways that contribute to an open, welcoming,

|

||||||

|

diverse, inclusive, and healthy community.

|

||||||

|

|

||||||

|

## Our Standards

|

||||||

|

|

||||||

|

Examples of behavior that contributes to a positive environment for our

|

||||||

|

community include:

|

||||||

|

|

||||||

|

* Demonstrating empathy and kindness toward other people

|

||||||

|

* Being respectful of differing opinions, viewpoints, and experiences

|

||||||

|

* Giving and gracefully accepting constructive feedback

|

||||||

|

* Accepting responsibility and apologizing to those affected by our mistakes,

|

||||||

|

and learning from the experience

|

||||||

|

* Focusing on what is best not just for us as individuals, but for the

|

||||||

|

overall community

|

||||||

|

|

||||||

|

Examples of unacceptable behavior include:

|

||||||

|

|

||||||

|

* The use of sexualized language or imagery, and sexual attention or

|

||||||

|

advances of any kind

|

||||||

|

* Trolling, insulting or derogatory comments, and personal or political attacks

|

||||||

|

* Public or private harassment

|

||||||

|

* Publishing others' private information, such as a physical or email

|

||||||

|

address, without their explicit permission

|

||||||

|

* Other conduct which could reasonably be considered inappropriate in a

|

||||||

|

professional setting

|

||||||

|

|

||||||

|

## Enforcement Responsibilities

|

||||||

|

|

||||||

|

Community leaders are responsible for clarifying and enforcing our standards of

|

||||||

|

acceptable behavior and will take appropriate and fair corrective action in

|

||||||

|

response to any behavior that they deem inappropriate, threatening, offensive,

|

||||||

|

or harmful.

|

||||||

|

|

||||||

|

Community leaders have the right and responsibility to remove, edit, or reject

|

||||||

|

comments, commits, code, wiki edits, issues, and other contributions that are

|

||||||

|

not aligned to this Code of Conduct, and will communicate reasons for moderation

|

||||||

|

decisions when appropriate.

|

||||||

|

|

||||||

|

## Scope

|

||||||

|

|

||||||

|

This Code of Conduct applies within all community spaces, and also applies when

|

||||||

|

an individual is officially representing the community in public spaces.

|

||||||

|

Examples of representing our community include using an official e-mail address,

|

||||||

|

posting via an official social media account, or acting as an appointed

|

||||||

|

representative at an online or offline event.

|

||||||

|

|

||||||

|

## Enforcement

|

||||||

|

|

||||||

|

Instances of abusive, harassing, or otherwise unacceptable behavior may be

|

||||||

|

reported to the community leaders responsible for enforcement at

|

||||||

|

ken.hibino7@gmail.com.

|

||||||

|

All complaints will be reviewed and investigated promptly and fairly.

|

||||||

|

|

||||||

|

All community leaders are obligated to respect the privacy and security of the

|

||||||

|

reporter of any incident.

|

||||||

|

|

||||||

|

## Enforcement Guidelines

|

||||||

|

|

||||||

|

Community leaders will follow these Community Impact Guidelines in determining

|

||||||

|

the consequences for any action they deem in violation of this Code of Conduct:

|

||||||

|

|

||||||

|

### 1. Correction

|

||||||

|

|

||||||

|

**Community Impact**: Use of inappropriate language or other behavior deemed

|

||||||

|

unprofessional or unwelcome in the community.

|

||||||

|

|

||||||

|

**Consequence**: A private, written warning from community leaders, providing

|

||||||

|

clarity around the nature of the violation and an explanation of why the

|

||||||

|

behavior was inappropriate. A public apology may be requested.

|

||||||

|

|

||||||

|

### 2. Warning

|

||||||

|

|

||||||

|

**Community Impact**: A violation through a single incident or series

|

||||||

|

of actions.

|

||||||

|

|

||||||

|

**Consequence**: A warning with consequences for continued behavior. No

|

||||||

|

interaction with the people involved, including unsolicited interaction with

|

||||||

|

those enforcing the Code of Conduct, for a specified period of time. This

|

||||||

|

includes avoiding interactions in community spaces as well as external channels

|

||||||

|

like social media. Violating these terms may lead to a temporary or

|

||||||

|

permanent ban.

|

||||||

|

|

||||||

|

### 3. Temporary Ban

|

||||||

|

|

||||||

|

**Community Impact**: A serious violation of community standards, including

|

||||||

|

sustained inappropriate behavior.

|

||||||

|

|

||||||

|

**Consequence**: A temporary ban from any sort of interaction or public

|

||||||

|

communication with the community for a specified period of time. No public or

|

||||||

|

private interaction with the people involved, including unsolicited interaction

|

||||||

|

with those enforcing the Code of Conduct, is allowed during this period.

|

||||||

|

Violating these terms may lead to a permanent ban.

|

||||||

|

|

||||||

|

### 4. Permanent Ban

|

||||||

|

|

||||||

|

**Community Impact**: Demonstrating a pattern of violation of community

|

||||||

|

standards, including sustained inappropriate behavior, harassment of an

|

||||||

|

individual, or aggression toward or disparagement of classes of individuals.

|

||||||

|

|

||||||

|

**Consequence**: A permanent ban from any sort of public interaction within

|

||||||

|

the community.

|

||||||

|

|

||||||

|

## Attribution

|

||||||

|

|

||||||

|

This Code of Conduct is adapted from the [Contributor Covenant][homepage],

|

||||||

|

version 2.0, available at

|

||||||

|

https://www.contributor-covenant.org/version/2/0/code_of_conduct.html.

|

||||||

|

|

||||||

|

Community Impact Guidelines were inspired by [Mozilla's code of conduct

|

||||||

|

enforcement ladder](https://github.com/mozilla/diversity).

|

||||||

|

|

||||||

|

[homepage]: https://www.contributor-covenant.org

|

||||||

|

|

||||||

|

For answers to common questions about this code of conduct, see the FAQ at

|

||||||

|

https://www.contributor-covenant.org/faq. Translations are available at

|

||||||

|

https://www.contributor-covenant.org/translations.

|

||||||

@@ -45,6 +45,7 @@ Thank you! We'll try to respond as quickly as possible.

|

|||||||

6. Create a new pull request

|

6. Create a new pull request

|

||||||

|

|

||||||

Please try to keep your pull request focused in scope and avoid including unrelated commits.

|

Please try to keep your pull request focused in scope and avoid including unrelated commits.

|

||||||

|

Please run tests against a Redis cluster locally with the `--redis_cluster` flag to ensure that the code works with Redis Cluster. TODO: Run tests using Redis cluster on CI.

|

||||||

|

|

||||||

After you have submitted your pull request, we'll try to get back to you as soon as possible. We may suggest some changes or improvements.

|

After you have submitted your pull request, we'll try to get back to you as soon as possible. We may suggest some changes or improvements.

|

||||||

|

|

||||||

|

|||||||

7

Makefile

Normal file

7

Makefile

Normal file

@@ -0,0 +1,7 @@

|

|||||||

|

ROOT_DIR:=$(shell dirname $(realpath $(firstword $(MAKEFILE_LIST))))

|

||||||

|

|

||||||

|

proto: internal/proto/asynq.proto

|

||||||

|

protoc -I=$(ROOT_DIR)/internal/proto \

|

||||||

|

--go_out=$(ROOT_DIR)/internal/proto \

|

||||||

|

--go_opt=module=github.com/hibiken/asynq/internal/proto \

|

||||||

|

$(ROOT_DIR)/internal/proto/asynq.proto

|

||||||

256

README.md

256

README.md

@@ -1,86 +1,114 @@

|

|||||||

# Asynq

|

<img src="https://user-images.githubusercontent.com/11155743/114697792-ffbfa580-9d26-11eb-8e5b-33bef69476dc.png" alt="Asynq logo" width="360px" />

|

||||||

|

|

||||||

|

# Simple, reliable & efficient distributed task queue in Go

|

||||||

|

|

||||||

[](https://travis-ci.com/hibiken/asynq)

|

|

||||||

[](https://opensource.org/licenses/MIT)

|

|

||||||

[](https://goreportcard.com/report/github.com/hibiken/asynq)

|

|

||||||

[](https://godoc.org/github.com/hibiken/asynq)

|

[](https://godoc.org/github.com/hibiken/asynq)

|

||||||

|

[](https://goreportcard.com/report/github.com/hibiken/asynq)

|

||||||

|

|

||||||

|

[](https://opensource.org/licenses/MIT)

|

||||||

[](https://gitter.im/go-asynq/community)

|

[](https://gitter.im/go-asynq/community)

|

||||||

[](https://codecov.io/gh/hibiken/asynq)

|

|

||||||

|

|

||||||

## Overview

|

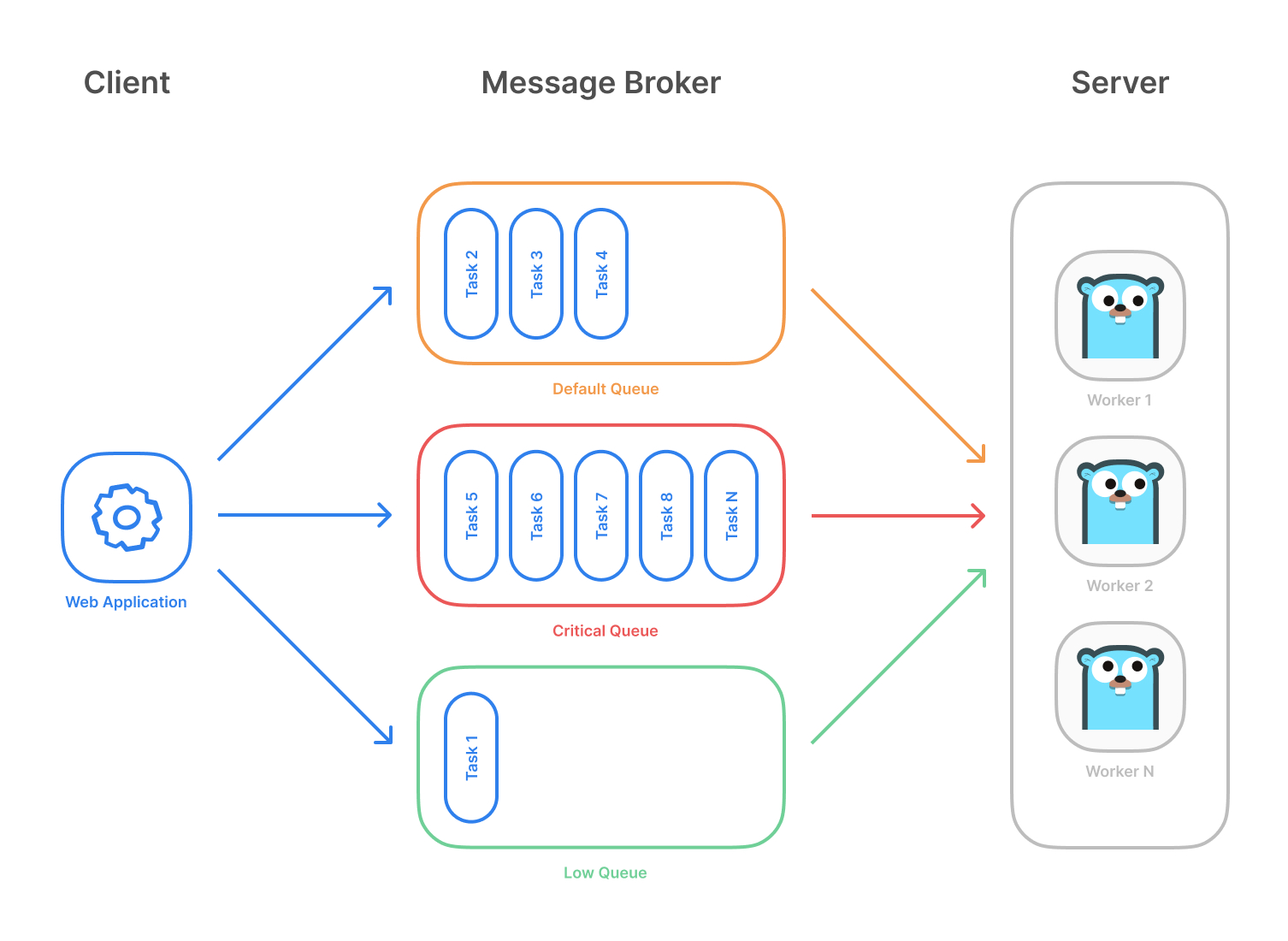

Asynq is a Go library for queueing tasks and processing them asynchronously with workers. It's backed by [Redis](https://redis.io/) and is designed to be scalable yet easy to get started.

|

||||||

|

|

||||||

Asynq is a Go library for queueing tasks and processing them in the background with workers. It is backed by Redis and it is designed to have a low barrier to entry. It should be integrated in your web stack easily.

|

|

||||||

|

|

||||||

High-level overview of how Asynq works:

|

High-level overview of how Asynq works:

|

||||||

|

|

||||||

- Client puts task on a queue

|

- Client puts tasks on a queue

|

||||||

- Server pulls task off queues and starts a worker goroutine for each task

|

- Server pulls tasks off queues and starts a worker goroutine for each task

|

||||||

- Tasks are processed concurrently by multiple workers

|

- Tasks are processed concurrently by multiple workers

|

||||||

|

|

||||||

Task queues are used as a mechanism to distribute work across multiple machines.

|

Task queues are used as a mechanism to distribute work across multiple machines. A system can consist of multiple worker servers and brokers, giving way to high availability and horizontal scaling.

|

||||||

A system can consist of multiple worker servers and brokers, giving way to high availability and horizontal scaling.

|

|

||||||

|

|

||||||

|

**Example use case**

|

||||||

|

|

||||||

## Stability and Compatibility

|

|

||||||

|

|

||||||

**Important Note**: Current major version is zero (v0.x.x) to accommodate rapid development and fast iteration while getting early feedback from users (Feedback on APIs is appreciated!). The public API could change without a major version update before v1.0.0 release.

|

|

||||||

|

|

||||||

**Status**: The library is currently undergoing heavy development with frequent, breaking API changes.

|

|

||||||

|

|

||||||

## Features

|

## Features

|

||||||

|

|

||||||

- Guaranteed [at least one execution](https://www.cloudcomputingpatterns.org/at_least_once_delivery/) of a task

|

- Guaranteed [at least one execution](https://www.cloudcomputingpatterns.org/at_least_once_delivery/) of a task

|

||||||

- Scheduling of tasks

|

- Scheduling of tasks

|

||||||

- Durability since tasks are written to Redis

|

|

||||||

- [Retries](https://github.com/hibiken/asynq/wiki/Task-Retry) of failed tasks

|

- [Retries](https://github.com/hibiken/asynq/wiki/Task-Retry) of failed tasks

|

||||||

- [Weighted priority queues](https://github.com/hibiken/asynq/wiki/Priority-Queues#weighted-priority-queues)

|

- Automatic recovery of tasks in the event of a worker crash

|

||||||

- [Strict priority queues](https://github.com/hibiken/asynq/wiki/Priority-Queues#strict-priority-queues)

|

- [Weighted priority queues](https://github.com/hibiken/asynq/wiki/Queue-Priority#weighted-priority)

|

||||||

|

- [Strict priority queues](https://github.com/hibiken/asynq/wiki/Queue-Priority#strict-priority)

|

||||||

- Low latency to add a task since writes are fast in Redis

|

- Low latency to add a task since writes are fast in Redis

|

||||||

- De-duplication of tasks using [unique option](https://github.com/hibiken/asynq/wiki/Unique-Tasks)

|

- De-duplication of tasks using [unique option](https://github.com/hibiken/asynq/wiki/Unique-Tasks)

|

||||||

- Allow [timeout and deadline per task](https://github.com/hibiken/asynq/wiki/Task-Timeout-and-Cancelation)

|

- Allow [timeout and deadline per task](https://github.com/hibiken/asynq/wiki/Task-Timeout-and-Cancelation)

|

||||||

- [Flexible handler interface with support for middlewares](https://github.com/hibiken/asynq/wiki/Handler-Deep-Dive)

|

- [Flexible handler interface with support for middlewares](https://github.com/hibiken/asynq/wiki/Handler-Deep-Dive)

|

||||||

- [Support Redis Sentinels](https://github.com/hibiken/asynq/wiki/Automatic-Failover) for HA

|

- [Ability to pause queue](/tools/asynq/README.md#pause) to stop processing tasks from the queue

|

||||||

|

- [Periodic Tasks](https://github.com/hibiken/asynq/wiki/Periodic-Tasks)

|

||||||

|

- [Support Redis Cluster](https://github.com/hibiken/asynq/wiki/Redis-Cluster) for automatic sharding and high availability

|

||||||

|

- [Support Redis Sentinels](https://github.com/hibiken/asynq/wiki/Automatic-Failover) for high availability

|

||||||

|

- Integration with [Prometheus](https://prometheus.io/) to collect and visualize queue metrics

|

||||||

|

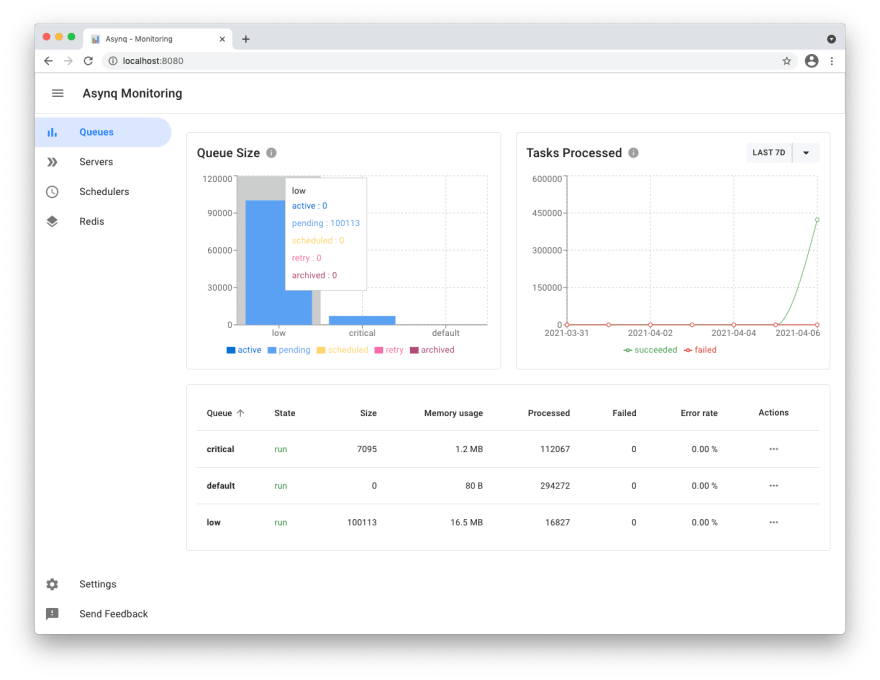

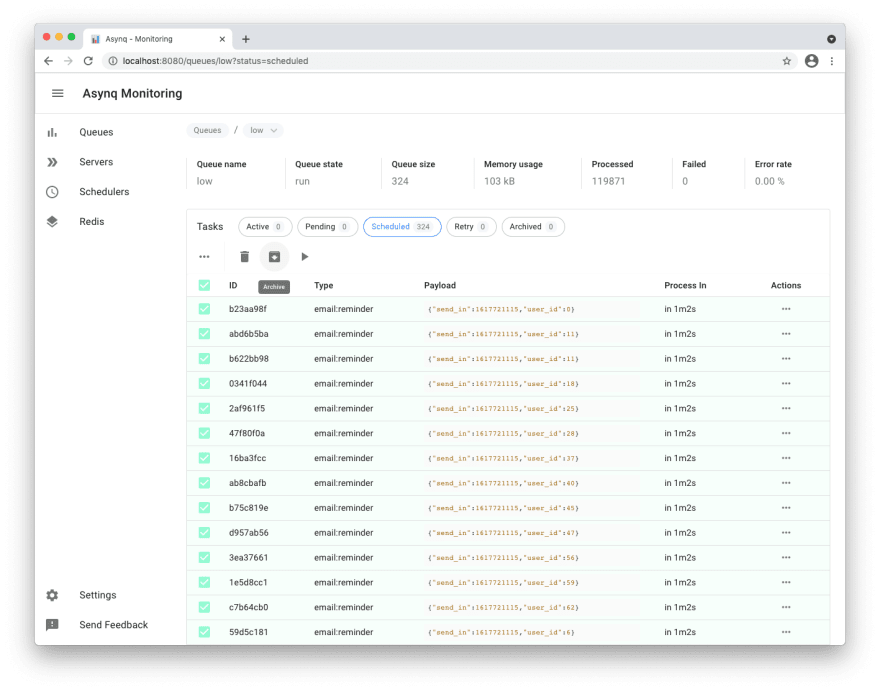

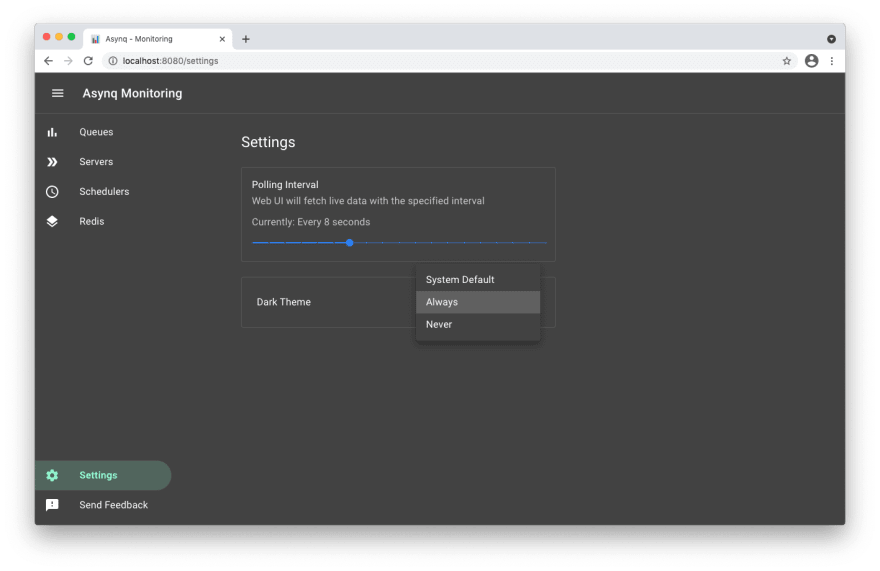

- [Web UI](#web-ui) to inspect and remote-control queues and tasks

|

||||||

- [CLI](#command-line-tool) to inspect and remote-control queues and tasks

|

- [CLI](#command-line-tool) to inspect and remote-control queues and tasks

|

||||||

|

|

||||||

|

## Stability and Compatibility

|

||||||

|

|

||||||

|

**Status**: The library is currently undergoing **heavy development** with frequent, breaking API changes.

|

||||||

|

|

||||||

|

> ☝️ **Important Note**: Current major version is zero (`v0.x.x`) to accommodate rapid development and fast iteration while getting early feedback from users (_feedback on APIs is appreciated!_). The public API could change without a major version update before `v1.0.0` release.

|

||||||

|

|

||||||

## Quickstart

|

## Quickstart

|

||||||

|

|

||||||

First, make sure you are running a Redis server locally.

|

Make sure you have Go installed ([download](https://golang.org/dl/)). Version `1.14` or higher is required.

|

||||||

|

|

||||||

|

Initialize your project by creating a folder and then running `go mod init github.com/your/repo` ([learn more](https://blog.golang.org/using-go-modules)) inside the folder. Then install Asynq library with the [`go get`](https://golang.org/cmd/go/#hdr-Add_dependencies_to_current_module_and_install_them) command:

|

||||||

|

|

||||||

```sh

|

```sh

|

||||||

$ redis-server

|

go get -u github.com/hibiken/asynq

|

||||||

```

|

```

|

||||||

|

|

||||||

|

Make sure you're running a Redis server locally or from a [Docker](https://hub.docker.com/_/redis) container. Version `4.0` or higher is required.

|

||||||

|

|

||||||

Next, write a package that encapsulates task creation and task handling.

|

Next, write a package that encapsulates task creation and task handling.

|

||||||

|

|

||||||

```go

|

```go

|

||||||

package tasks

|

package tasks

|

||||||

|

|

||||||

import (

|

import (

|

||||||

|

"context"

|

||||||

|

"encoding/json"

|

||||||

"fmt"

|

"fmt"

|

||||||

|

"log"

|

||||||

|

"time"

|

||||||

"github.com/hibiken/asynq"

|

"github.com/hibiken/asynq"

|

||||||

)

|

)

|

||||||

|

|

||||||

// A list of task types.

|

// A list of task types.

|

||||||

const (

|

const (

|

||||||

EmailDelivery = "email:deliver"

|

TypeEmailDelivery = "email:deliver"

|

||||||

ImageProcessing = "image:process"

|

TypeImageResize = "image:resize"

|

||||||

)

|

)

|

||||||

|

|

||||||

|

type EmailDeliveryPayload struct {

|

||||||

|

UserID int

|

||||||

|

TemplateID string

|

||||||

|

}

|

||||||

|

|

||||||

|

type ImageResizePayload struct {

|

||||||

|

SourceURL string

|

||||||

|

}

|

||||||

|

|

||||||

//----------------------------------------------

|

//----------------------------------------------

|

||||||

// Write a function NewXXXTask to create a task.

|

// Write a function NewXXXTask to create a task.

|

||||||

// A task consists of a type and a payload.

|

// A task consists of a type and a payload.

|

||||||

//----------------------------------------------

|

//----------------------------------------------

|

||||||

|

|

||||||

func NewEmailDeliveryTask(userID int, tmplID string) *asynq.Task {

|

func NewEmailDeliveryTask(userID int, tmplID string) (*asynq.Task, error) {

|

||||||

payload := map[string]interface{}{"user_id": userID, "template_id": tmplID}

|

payload, err := json.Marshal(EmailDeliveryPayload{UserID: userID, TemplateID: tmplID})

|

||||||

return asynq.NewTask(EmailDelivery, payload)

|

if err != nil {

|

||||||

|

return nil, err

|

||||||

|

}

|

||||||

|

return asynq.NewTask(TypeEmailDelivery, payload), nil

|

||||||

}

|

}

|

||||||

|

|

||||||

func NewImageProcessingTask(src, dst string) *asynq.Task {

|

func NewImageResizeTask(src string) (*asynq.Task, error) {

|

||||||

payload := map[string]interface{}{"src": src, "dst": dst}

|

payload, err := json.Marshal(ImageResizePayload{SourceURL: src})

|

||||||

return asynq.NewTask(ImageProcessing, payload)

|

if err != nil {

|

||||||

|

return nil, err

|

||||||

|

}

|

||||||

|

// task options can be passed to NewTask, which can be overridden at enqueue time.

|

||||||

|

return asynq.NewTask(TypeImageResize, payload, asynq.MaxRetry(5), asynq.Timeout(20 * time.Minute)), nil

|

||||||

}

|

}

|

||||||

|

|

||||||

//---------------------------------------------------------------

|

//---------------------------------------------------------------

|

||||||

@@ -92,51 +120,42 @@ func NewImageProcessingTask(src, dst string) *asynq.Task {

|

|||||||

//---------------------------------------------------------------

|

//---------------------------------------------------------------

|

||||||

|

|

||||||

func HandleEmailDeliveryTask(ctx context.Context, t *asynq.Task) error {

|

func HandleEmailDeliveryTask(ctx context.Context, t *asynq.Task) error {

|

||||||

userID, err := t.Payload.GetInt("user_id")

|

var p EmailDeliveryPayload

|

||||||

if err != nil {

|

if err := json.Unmarshal(t.Payload(), &p); err != nil {

|

||||||

return err

|

return fmt.Errorf("json.Unmarshal failed: %v: %w", err, asynq.SkipRetry)

|

||||||

}

|

}

|

||||||

tmplID, err := t.Payload.GetString("template_id")

|

log.Printf("Sending Email to User: user_id=%d, template_id=%s", p.UserID, p.TemplateID)

|

||||||

if err != nil {

|

// Email delivery code ...

|

||||||

return err

|

|

||||||

}

|

|

||||||

fmt.Printf("Send Email to User: user_id = %d, template_id = %s\n", userID, tmplID)

|

|

||||||

// Email delivery logic ...

|

|

||||||

return nil

|

return nil

|

||||||

}

|

}

|

||||||

|

|

||||||

// ImageProcessor implements asynq.Handler interface.

|

// ImageProcessor implements asynq.Handler interface.

|

||||||

type ImageProcesser struct {

|

type ImageProcessor struct {

|

||||||

// ... fields for struct

|

// ... fields for struct

|

||||||

}

|

}

|

||||||

|

|

||||||

func (p *ImageProcessor) ProcessTask(ctx context.Context, t *asynq.Task) error {

|

func (processor *ImageProcessor) ProcessTask(ctx context.Context, t *asynq.Task) error {

|

||||||

src, err := t.Payload.GetString("src")

|

var p ImageResizePayload

|

||||||

if err != nil {

|

if err := json.Unmarshal(t.Payload(), &p); err != nil {

|

||||||

return err

|

return fmt.Errorf("json.Unmarshal failed: %v: %w", err, asynq.SkipRetry)

|

||||||

}

|

}

|

||||||

dst, err := t.Payload.GetString("dst")

|

log.Printf("Resizing image: src=%s", p.SourceURL)

|

||||||

if err != nil {

|

// Image resizing code ...

|

||||||

return err

|

|

||||||

}

|

|

||||||

fmt.Printf("Process image: src = %s, dst = %s\n", src, dst)

|

|

||||||

// Image processing logic ...

|

|

||||||

return nil

|

return nil

|

||||||

}

|

}

|

||||||

|

|

||||||

func NewImageProcessor() *ImageProcessor {

|

func NewImageProcessor() *ImageProcessor {

|

||||||

// ... return an instance

|

return &ImageProcessor{}

|

||||||

}

|

}

|

||||||

```

|

```

|

||||||

|

|

||||||

In your web application code, import the above package and use [`Client`](https://pkg.go.dev/github.com/hibiken/asynq?tab=doc#Client) to put tasks on the queue.

|

In your application code, import the above package and use [`Client`](https://pkg.go.dev/github.com/hibiken/asynq?tab=doc#Client) to put tasks on queues.

|

||||||

A task will be processed asynchronously by a background worker as soon as the task gets enqueued.

|

|

||||||

Scheduled tasks will be stored in Redis and will be enqueued at the specified time.

|

|

||||||

|

|

||||||

```go

|

```go

|

||||||

package main

|

package main

|

||||||

|

|

||||||

import (

|

import (

|

||||||

|

"log"

|

||||||

"time"

|

"time"

|

||||||

|

|

||||||

"github.com/hibiken/asynq"

|

"github.com/hibiken/asynq"

|

||||||

@@ -146,64 +165,57 @@ import (

|

|||||||

const redisAddr = "127.0.0.1:6379"

|

const redisAddr = "127.0.0.1:6379"

|

||||||

|

|

||||||

func main() {

|

func main() {

|

||||||

r := asynq.RedisClientOpt{Addr: redisAddr}

|

client := asynq.NewClient(asynq.RedisClientOpt{Addr: redisAddr})

|

||||||

c := asynq.NewClient(r)

|